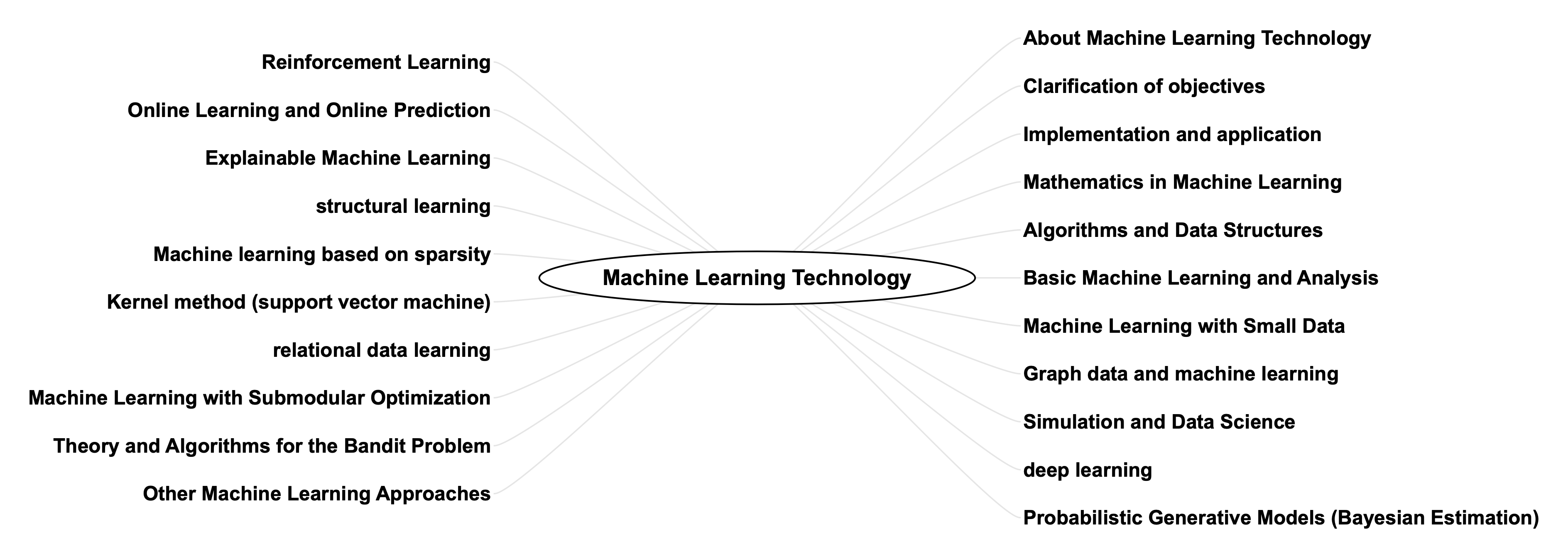

This blog covers the areas of machine learning technology shown in the map above.

The details are described below.

About Machine Learning Technology

Machine learning is the process of using the computational power of a computer to learn from a large amount of training data and find the patterns and rules in it. The goal is not simply to memorize the training data as it is, but to clarify (abstract) the model (patterns and rules) behind it so that the system can make judgments when data other than the training data is input; this ability is known as generalization.

This article provides an introduction to this machine learning technique.

Problem setting and quantification

To apply machine learning, it is first necessary to determine the nature of the problem and quantify it as a target value, using problem-solving frameworks and metrics such as PDCA, KPI, and KGI. When the problem is not yet clear, it is also necessary to formulate hypotheses using deductive reasoning and non-deductive methods such as induction, projection, analogy, and abduction, to verify those hypotheses without falling into confirmation bias, and to devise ways of quantifying them with methods such as Fermi estimation.

In the following pages of this blog, we describe these problem-solving methods, thinking methods, and experimental designs in detail.

Implementation and application

For implementations of machine learning, see Programming Techniques in Clojure and Functional Programming, Python and Machine Learning, C/C++ and various machine learning algorithms, and R Language and Machine Learning.

In addition, see the pages on hardware technology, natural language processing technology, knowledge data and its use, semantic web technology, ontology technology, chatbot technology, agent technology, user interface technology, etc., which form the basis of artificial intelligence technologies built on machine learning.

For the platforms that use them, see ICT Technology in IT Infrastructure Technology, Web Technologies, Microservices and Multi-Agent Systems, Database Technology, search technology, etc.

Mathematics in Machine Learning

Machine learning is a technology built on mathematical theories and methods: machine learning algorithms use mathematical models to analyze data and discover patterns. Mathematics is the study of concepts such as number, quantity, structure, space, change, and uncertainty, which it handles, reasons about logically, and models. Its basic fields include arithmetic, which deals with basic calculations; algebra, which deals with mathematical expressions and equations; geometry, which deals with shapes, sizes, and positions in space; and analysis, which deals with limits and calculus. Beyond these, there are fields such as probability theory, statistics, mathematical logic, mathematical optimization, and topology.

In the following pages of this blog, I discuss a variety of topics in these areas of mathematics, focusing in particular on what is needed for artificial intelligence, machine learning, and other computer applications.

Algorithms and Data Structures

Algorithms and data structures are fundamental, closely related concepts in computer science. An algorithm is a procedure that takes an input, processes it through a clearly defined sequence of steps, and returns an output; in other words, it is a precise description of how to solve a given problem. A data structure is a way of organizing data so that it can be stored, retrieved, and manipulated efficiently.

Specific algorithms include basic algorithms for sorting, searching, pattern recognition, database operations, error correction, and encryption, as well as simulation algorithms such as Markov chain Monte Carlo methods. Efficient data manipulation depends on choosing the algorithm that suits the data structure at hand.
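As a small illustration of how an algorithm depends on its data structure, here is a minimal binary search sketch in Python. It assumes the input list is already sorted; the function name `binary_search` and the sample values are illustrative only.

```python
from typing import List, Optional

def binary_search(sorted_items: List[int], target: int) -> Optional[int]:
    """Return the index of target in sorted_items, or None if it is absent.

    This only works because the list is sorted: each comparison halves the
    remaining search range, giving O(log n) comparisons instead of O(n).
    """
    lo, hi = 0, len(sorted_items) - 1
    while lo <= hi:
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            return mid
        if sorted_items[mid] < target:
            lo = mid + 1   # target can only be in the right half
        else:
            hi = mid - 1   # target can only be in the left half
    return None

data = sorted([42, 7, 19, 3, 88, 61])   # [3, 7, 19, 42, 61, 88]
print(binary_search(data, 42))  # 3
print(binary_search(data, 5))   # None
```

On an unsorted list the same code gives wrong answers, which is the sense in which algorithm and data structure have to be chosen together.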

The following pages of this blog discuss topics related to these algorithms and data structures.

Basic Machine Learning and Data Analysis

The following pages of this blog summarize some of the basic tasks in machine learning: regression, which finds a function to predict a continuous output from an input; classification, which restricts the output to a finite set of symbols (classes); clustering, which divides the input data into K sets according to some criterion; linear dimensionality reduction, which is used to process the high-dimensional data common in real systems; and sequence pattern extraction, which learns sequential patterns such as those found in genes and workflows.
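To make the clustering task concrete, the following is a minimal k-means sketch in plain Python. K-means is one common clustering criterion, not the only one the text allows for; the sketch assumes 2-D points and caller-supplied initial centroids, and the data are made up for illustration.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]

def kmeans(points: Sequence[Point], init: Sequence[Point],
           max_iters: int = 100) -> Tuple[List[Point], List[int]]:
    """Partition points into len(init) clusters by alternating two steps."""
    centroids = list(init)
    labels: List[int] = []
    for _ in range(max_iters):
        # Assignment step: attach every point to its nearest centroid.
        new_labels = [
            min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing, so the centroids are stable
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the previous centroid if a cluster is empty
                centroids[c] = (sum(x for x, _ in members) / len(members),
                                sum(y for _, y in members) / len(members))
    return centroids, labels

pts = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5), (8.0, 8.0), (9.0, 9.5), (8.5, 8.0)]
centroids, labels = kmeans(pts, init=[pts[0], pts[3]])
print(labels)     # [0, 0, 0, 1, 1, 1]
print(centroids)
```

K-means only finds a local optimum, so in practice it is usually run from several initializations and the best result is kept.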

Noise Removal, Data Cleansing, and Interpolation of Missing Values in Machine Learning

Noise removal, data cleansing, and interpolation of missing values are important processes in machine learning for improving data quality and the performance of predictive models.

Noise removal is a technique for eliminating unwanted information or random errors in data. Noise can arise from a variety of sources, including sensor noise, measurement error, and data entry errors. Because noise can harm both the training and the predictions of machine learning models, the goal of noise removal is to obtain reliable data and improve model performance. Data cleansing is the process of cleaning a dataset to resolve issues such as inaccuracies, incompleteness, duplicates, and missing values; interpolation of missing values is one part of it, filling each gap with an estimate derived from the surrounding data.
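A minimal sketch of two of the steps just described, assuming a 1-D numeric series in which missing values are marked with `None`: linear interpolation fills gaps from the nearest observed neighbours, and a centered moving average suppresses random noise. Both are simple stand-ins chosen for illustration; the blog's own pages may use other methods.

```python
from typing import List, Optional

def interpolate_missing(values: List[Optional[float]]) -> List[float]:
    """Fill None gaps by linear interpolation between the nearest known values.

    Leading or trailing gaps are filled with the nearest known value.
    """
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        raise ValueError("no observed values to interpolate from")
    out: List[float] = []
    for i, v in enumerate(values):
        if v is not None:
            out.append(v)
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is None:
            out.append(values[right])
        elif right is None:
            out.append(values[left])
        else:
            t = (i - left) / (right - left)   # fractional position in the gap
            out.append(values[left] + t * (values[right] - values[left]))
    return out

def moving_average(values: List[float], window: int = 3) -> List[float]:
    """Centered moving average; the window shrinks at the edges."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

raw = [1.0, None, 3.0, 10.0, 5.0, None, None, 8.0]
filled = interpolate_missing(raw)   # [1.0, 2.0, 3.0, 10.0, 5.0, 6.0, 7.0, 8.0]
print(moving_average(filled))
```

Note that smoothing also spreads the spike at index 3 into its neighbours; whether that value is noise or a real event is a domain decision the code cannot make.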

The following pages of this blog provide a variety of information about noise removal, data cleansing, and missing value interpolation in machine learning, including example implementations.

Parallel and Distributed Processing in Machine Learning

Training machine learning models on large amounts of data requires high-speed parallel and distributed processing. Parallel distributed processing spreads the work across multiple computers (or processors) that run at the same time, which speeds up processing.
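The core idea (split the data into shards, process them simultaneously, and combine the partial results) can be sketched with Python's standard `concurrent.futures` module. This is a single-machine illustration under stated assumptions, a one-parameter linear model `y = w * x` and synthetic data, not a distributed system. Threads are used so the sketch runs anywhere; `ProcessPoolExecutor` or a cluster framework would be the usual choice for CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence, Tuple

Pair = Tuple[float, float]

def shard_gradient(shard: Sequence[Pair], w: float) -> float:
    """Map step: gradient of sum((w*x - y)**2) over one shard of the data."""
    return sum(2.0 * (w * x - y) * x for x, y in shard)

def parallel_gradient(pairs: List[Pair], w: float, n_workers: int = 4) -> float:
    size = max(1, -(-len(pairs) // n_workers))          # ceiling division
    shards = [pairs[i:i + size] for i in range(0, len(pairs), size)]
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = pool.map(shard_gradient, shards, [w] * len(shards))
        return sum(partials)                             # reduce step

# Synthetic data from y = 3x; gradient descent driven by the parallel gradient.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
w, lr = 0.0, 0.01
for _ in range(200):
    w -= lr * parallel_gradient(data, w) / len(data)
print(round(w, 6))  # 3.0
```

Because the gradient is a sum over samples, the shards can be processed in any order on any worker and the result is the same as the serial computation, which is what makes this data-parallel pattern work.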

The following pages of this blog describe specific implementations of these parallel and distributed processing techniques.

Machine learning with small data

Small data refers to a data set with a limited number of samples. Small data makes machine learning more challenging because less data is available to train the model. Approaches such as (1) data augmentation, (2) transfer learning, (3) model simplification, and (4) cross-validation help machine learning achieve higher accuracy even without sufficient training data.
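Of the four approaches listed, cross-validation is the easiest to show in a few lines. The sketch below, a hypothetical helper `k_fold_indices` in plain Python, splits n sample indices into k folds so that every sample is used for validation exactly once, which makes the most of a small data set when estimating accuracy. The "predict the training mean" model in the usage lines is only a placeholder.

```python
from typing import List, Tuple

def k_fold_indices(n: int, k: int) -> List[Tuple[List[int], List[int]]]:
    """Return k (train_indices, validation_indices) pairs over range(n)."""
    if not 2 <= k <= n:
        raise ValueError("k must satisfy 2 <= k <= n")
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    splits, start = [], 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = [i for i in range(n) if not start <= i < start + size]
        splits.append((train, val))
        start += size
    return splits

# Usage: cross-validated squared error of a placeholder mean predictor.
y = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
errors = []
for train, val in k_fold_indices(len(y), k=3):
    pred = sum(y[i] for i in train) / len(train)
    errors.append(sum((y[i] - pred) ** 2 for i in val) / len(val))
print(sum(errors) / len(errors))
```

The folds here are contiguous for readability; with real data the indices are normally shuffled first so that each fold is representative.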

The following pages of this blog discuss various approaches to machine learning with small data.

Graph Data Algorithms and Machine Learning

Graphs are a way of expressing connections between entities such as objects and states. Because many problems can be reduced to graph problems, many algorithms have been proposed for graphs.

In the following pages of this blog, we discuss basic algorithms for graph data, such as search algorithms, shortest path algorithms, minimum spanning tree algorithms, network flow algorithms, DAG construction by strongly connected component decomposition, SAT, LCA, and decision trees, as well as applications such as knowledge data processing and Bayesian processing based on graph structures.
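As one example of the shortest path algorithms mentioned above, here is a compact Dijkstra sketch using Python's `heapq`. It assumes a directed graph stored as an adjacency dictionary with non-negative edge weights (Dijkstra's algorithm is not correct with negative weights); the graph itself is made up.

```python
import heapq
from typing import Dict, List, Tuple

Graph = Dict[str, List[Tuple[str, float]]]

def dijkstra(graph: Graph, source: str) -> Dict[str, float]:
    """Shortest-path distances from source to every reachable node."""
    dist: Dict[str, float] = {source: 0.0}
    heap: List[Tuple[float, str]] = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist.get(u, float("inf")):
            continue  # stale entry: a shorter path to u was already found
        for v, weight in graph.get(u, []):
            candidate = d + weight
            if candidate < dist.get(v, float("inf")):
                dist[v] = candidate          # relax the edge u -> v
                heapq.heappush(heap, (candidate, v))
    return dist

g = {"A": [("B", 4), ("C", 1)], "C": [("B", 2), ("D", 5)], "B": [("D", 1)]}
print(dijkstra(g, "A"))  # {'A': 0.0, 'B': 3.0, 'C': 1.0, 'D': 4.0}
```

The priority queue always expands the closest unsettled node next, so the direct edge A to B (cost 4) is superseded by the path A, C, B (cost 3).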

Graph Neural Networks

Graph neural networks (GNNs) apply deep learning to graph data, extracting features from the data, constructing neural networks based on the feature representations, and connecting them in multiple stages to capture complex data patterns and construct models with nonlinearities. The following is a comparison of the two types of neural networks.

The difference between them is that ordinary deep learning works on grid-like data structures such as images and text and computes on them with matrix operations, while a GNN specializes in graph structures and computes on data represented as a combination of nodes and edges.
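To make the contrast concrete, the sketch below implements one message-passing layer in plain Python: each node averages its own feature vector with those of its neighbours, applies a shared linear weight matrix, and passes the result through ReLU. This mean-aggregation rule is one common, simplified choice (similar in spirit to a graph convolutional layer), not the only GNN design; the graph, features, and identity weights are illustrative assumptions.

```python
from typing import Dict, List, Tuple

def gnn_layer(features: Dict[int, List[float]],
              edges: List[Tuple[int, int]],
              weight: List[List[float]]) -> Dict[int, List[float]]:
    """One mean-aggregation message-passing layer on an undirected graph."""
    neighbors: Dict[int, List[int]] = {n: [] for n in features}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    out_dim = len(weight[0])
    updated = {}
    for node, h in features.items():
        # Aggregate: mean over the node itself and its neighbours.
        group = [h] + [features[m] for m in neighbors[node]]
        agg = [sum(vals) / len(group) for vals in zip(*group)]
        # Transform: agg (1 x d_in) times weight (d_in x d_out), then ReLU.
        updated[node] = [
            max(0.0, sum(a * weight[i][j] for i, a in enumerate(agg)))
            for j in range(out_dim)
        ]
    return updated

feats = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
identity = [[1.0, 0.0], [0.0, 1.0]]
print(gnn_layer(feats, [(0, 1), (1, 2)], identity))
```

Stacking several such layers lets information travel several hops across the graph; in a trained GNN the weight matrices are learned rather than fixed.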

In the following pages of this blog, the algorithm, implementation examples, and various application examples of GNN are described.

Simulation, Data Science and Artificial Intelligence

Large-scale computer simulations have become an effective tool in a variety of fields, from astronomy, meteorology, physical properties, and biology to epidemics and urban growth, but only a limited number of simulations can be performed purely from fundamental laws (first principles). The power of data science is therefore needed to set the parameter values and initial values that the calculations presuppose. Beyond that role, modern data science has become even more deeply intertwined with simulation.

The following pages of this blog discuss these simulations, data science, and artificial intelligence.

Deep Learning

Deep learning is a machine learning technology that uses a mathematical model called a neural network, which imitates the way nerve cells in the brain are interconnected in multiple layers through connections called synapses. Because neural networks can form models with many parameters, they can solve complex problems in a wide range of fields such as image recognition, speech recognition, and natural language processing, and can make highly accurate predictions and judgments.

Deep learning is characterized by its ability to extract features automatically by learning from large amounts of data. There is therefore less need to design features by hand, and it can be more versatile than conventional machine learning algorithms. In addition, Python tools such as TensorFlow/Keras and PyTorch are available, and they make building and training models relatively easy.

The following pages of this blog summarize the theory of deep learning and its implementation in fields such as image recognition, speech recognition, and natural language processing, with particular attention to Python tools such as TensorFlow/Keras and PyTorch.

Automatic Generation by Machine Learning

Automatic generation by machine learning involves a computer learning patterns and regularities in data and generating new data based on them. There are various approaches to automatic generation, including deep learning, probabilistic, and simulation approaches.
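One of the simplest probabilistic approaches to automatic generation is a Markov chain that learns which word tends to follow which, then generates new text by sampling those transitions. The sketch below is a toy word-level bigram generator in plain Python; the corpus is made up, and the seed is fixed only so runs are repeatable.

```python
import random
from collections import defaultdict
from typing import Dict, List

def train_bigram(words: List[str]) -> Dict[str, List[str]]:
    """Learn transitions: for each word, the list of words observed after it."""
    model: Dict[str, List[str]] = defaultdict(list)
    for prev, nxt in zip(words, words[1:]):
        model[prev].append(nxt)  # repeats encode transition frequencies
    return dict(model)

def generate(model: Dict[str, List[str]], start: str, length: int,
             rng: random.Random) -> List[str]:
    """Sample a new sequence by repeatedly drawing an observed successor."""
    out = [start]
    while len(out) < length:
        followers = model.get(out[-1])
        if not followers:
            break  # no observed successor: stop early
        out.append(rng.choice(followers))
    return out

corpus = "the cat sat on the mat and the cat ran to the door".split()
model = train_bigram(corpus)
print(" ".join(generate(model, "the", 8, random.Random(42))))
```

Every generated transition was seen in the training data, but the sequence as a whole can be new; deep generative models pursue the same goal with far richer learned distributions.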

In the following pages of this blog, we describe various approaches and concrete implementations of machine learning-based automatic generation techniques.

Reinforcement Learning

Reinforcement learning is a type of machine learning that deals with the problem of an agent in an environment observing its current state and deciding what action to take. The agent obtains rewards from the environment by selecting actions, and reinforcement learning learns the policy that maximizes the total reward obtained over a series of actions. The environment is typically formulated as a Markov decision process, and TD learning and Q-learning are well-known representative methods.
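A minimal tabular Q-learning sketch, assuming a made-up five-state corridor in which the agent starts at the left end, can move left or right, and receives a reward of 1 on reaching the rightmost state. Each update moves the estimate Q(s, a) toward r + γ·max Q(s′, ·), and actions are chosen ε-greedily; the hyperparameters are illustrative, not tuned.

```python
import random
from typing import List, Tuple

N_STATES, GOAL = 5, 4
ACTIONS = (-1, +1)  # index 0: move left, index 1: move right

def step(state: int, action: int) -> Tuple[int, float, bool]:
    """Environment: move within the corridor; reward 1 only at the goal."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    return nxt, (1.0 if nxt == GOAL else 0.0), nxt == GOAL

def q_learning(episodes: int = 500, alpha: float = 0.1, gamma: float = 0.9,
               epsilon: float = 0.1, seed: int = 0) -> List[List[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))            # explore
            else:
                best = max(q[s])                           # exploit, random tie-break
                a = rng.choice([i for i, v in enumerate(q[s]) if v == best])
            s2, r, done = step(s, ACTIONS[a])
            # TD target: no bootstrapping from the terminal state.
            target = r if done else r + gamma * max(q[s2])
            q[s][a] += alpha * (target - q[s][a])
            s = s2
    return q

q = q_learning()
policy = ["left" if row[0] > row[1] else "right" for row in q[:GOAL]]
print(policy)  # ['right', 'right', 'right', 'right']
```

The learned values for "right" approach 0.9 raised to the number of remaining steps, so the discount factor γ is what makes shorter routes to the reward more valuable.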

In the following pages of this blog, we describe the theory, algorithms, and various implementations in python and other languages related to reinforcement learning.

Online Learning/Online Prediction

Online learning is a learning method in which the model is improved sequentially: each time a single data point (or a small part of the data) arrives, the model is updated using only that data, rather than using all the data at once. Because of this, it can be applied to data analysis at a scale where not all the data fits in memory or cache, and to learning in environments where data is generated continuously.

Reinforcement learning and online prediction are frameworks that build on this kind of sequential learning to handle a variety of decision-making problems.
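The sequential update behind online learning can be sketched as an online linear regression that performs one stochastic gradient step per incoming sample and never stores past data. The model `y ≈ w·x + b`, the learning rate, and the synthetic stream generated from y = 2x + 1 are all assumptions made for illustration.

```python
import itertools
from typing import Iterator, Tuple

class OnlineLinearRegression:
    """y ~ w*x + b, updated one sample at a time by SGD on squared error."""

    def __init__(self, lr: float = 0.05) -> None:
        self.w, self.b, self.lr = 0.0, 0.0, lr

    def predict(self, x: float) -> float:
        return self.w * x + self.b

    def update(self, x: float, y: float) -> None:
        err = self.predict(x) - y      # gradient of 0.5*err**2 w.r.t. prediction
        self.w -= self.lr * err * x
        self.b -= self.lr * err

def stream(n: int) -> Iterator[Tuple[float, float]]:
    """Synthetic data stream from y = 2x + 1; one sample exists at a time."""
    xs = itertools.cycle([0.0, 0.25, 0.5, 0.75, 1.0])
    for x in itertools.islice(xs, n):
        yield x, 2.0 * x + 1.0

model = OnlineLinearRegression()
for x, y in stream(5000):
    model.update(x, y)
print(round(model.w, 3), round(model.b, 3))  # 2.0 1.0
```

Memory use stays constant no matter how long the stream runs, which is exactly the property that lets online learning handle data too large to hold at once.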

In the following pages of this blog, we provide a theoretical overview of online learning, reinforcement learning, and online prediction, as well as various implementations and applications.

Probabilistic approaches in machine learning

A probabilistic generative model is a way to model the distribution of data and generate new data from it. In learning with a probabilistic generative model, a probability distribution such as a Gaussian, beta, or Dirichlet distribution is first assumed to model the data, and the parameters of that distribution are then estimated by maximum likelihood estimation or Bayesian estimation, using algorithms such as variational methods and MCMC, so that new data can be generated from it.

Probabilistic generative models are used for both supervised and unsupervised learning. In supervised learning, they can model the distribution of the data and generate new data from the model. In unsupervised learning, they can model the distribution of the data in order to estimate latent variables; in a clustering problem, for example, the latent variables indicate which cluster each data point belongs to, dividing the data into multiple clusters.
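A minimal sketch of the fit-then-generate workflow, assuming the data are one-dimensional and modeled by a single Gaussian: the parameters are estimated by maximum likelihood, and new data are then drawn from the fitted distribution. Richer models such as mixtures or HMMs follow the same pattern with more parameters, using variational inference or MCMC instead of the closed-form estimates shown here.

```python
import random
from typing import List, Tuple

def fit_gaussian_mle(data: List[float]) -> Tuple[float, float]:
    """Maximum likelihood estimates of a Gaussian's mean and variance."""
    n = len(data)
    mu = sum(data) / n
    var = sum((x - mu) ** 2 for x in data) / n  # MLE divides by n, not n - 1
    return mu, var

def generate(mu: float, var: float, n: int, rng: random.Random) -> List[float]:
    """Draw new samples from the fitted generative model."""
    return [rng.gauss(mu, var ** 0.5) for _ in range(n)]

observed = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
mu, var = fit_gaussian_mle(observed)
print(mu, var)  # 5.0 4.0
new_data = generate(mu, var, 5, random.Random(0))
```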

Typical probabilistic generative models include topic models (LDA), hidden Markov models (HMM), Boltzmann machines (BM), autoencoders (AE), variational autoencoders (VAE), generative adversarial networks (GAN), etc.

These methods can be applied to natural language processing as represented by topic models, speech recognition using hidden Markov models, sensor analysis, and analysis of various statistical information including geographic information.

In the following pages of this blog, we discuss Bayesian modeling, topic modeling, and various applications and implementations using these probabilistic generative models.

Machine Learning with Bayesian Inference and Graphical Model

Machine learning using Bayesian inference is a statistical learning method that calculates the posterior probability distribution for an unknown variable given observed data according to Bayes’ theorem, the fundamental law of probability, and then calculates estimators for the unknown variable and predictive distributions for new data to be observed in the future based on the obtained posterior probability distribution.
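The simplest worked instance of this procedure is a coin-flip (Bernoulli) model with a Beta prior, where Bayes' theorem gives the posterior in closed form: a Beta(α, β) prior combined with s successes and f failures yields a Beta(α + s, β + f) posterior. The sketch below assumes a uniform Beta(1, 1) prior and made-up counts, and uses `fractions.Fraction` so the results are exact.

```python
from fractions import Fraction
from typing import Tuple

def beta_bernoulli_posterior(alpha: int, beta: int, successes: int,
                             failures: int) -> Tuple[int, int]:
    """Conjugate update: Beta(alpha, beta) prior -> Beta posterior parameters."""
    return alpha + successes, beta + failures

a, b = beta_bernoulli_posterior(1, 1, successes=7, failures=3)   # Beta(8, 4)
posterior_mean = Fraction(a, a + b)          # estimator of the success rate
map_estimate = Fraction(a - 1, a + b - 2)    # posterior mode
# For this model, the predictive probability that the next observation is a
# success equals the posterior mean.
predictive_success = posterior_mean
print(a, b, posterior_mean, map_estimate)    # 8 4 2/3 7/10
```

With the uniform prior, the posterior mode coincides with the maximum likelihood estimate 7/10, while the posterior mean 2/3 is pulled slightly toward the prior's center of 1/2.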

In the following pages of this blog, we describe the basic theory, implementation, and graphical model approach to this machine learning technique based on Bayesian inference.

Nonparametric Bayesian and Gaussian Processes

Nonparametric Bayesian models are, in a nutshell, probabilistic models on an “infinite-dimensional” space, together with modern inference algorithms, such as the Markov chain Monte Carlo method, that can compute them efficiently. Their applications include clustering with flexible generative models, structural change estimation with statistical models, factor analysis, and sparse modeling.

The Gaussian process takes the probabilistic approach a step further: instead of fixing the form of the function f(x), it allows “any function with a certain degree of smoothness” and uses Bayesian estimation to obtain a probability distribution over such functions (Gaussian process regression). A Gaussian process can be thought of as a box that produces a function f() each time it is shaken; by fitting this box to real data, we obtain a cloud of posterior functions.
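A minimal Gaussian process regression sketch with NumPy, assuming a zero-mean prior, a squared-exponential (RBF) kernel, a small fixed noise term, and hand-picked hyperparameters rather than fitted ones. The posterior follows the standard formulas mean = K_s^T (K + noise*I)^-1 y and cov = K_ss - K_s^T (K + noise*I)^-1 K_s, so the variance is small near the training points and returns to the prior variance far from them.

```python
import numpy as np

def rbf_kernel(a: np.ndarray, b: np.ndarray, length_scale: float = 1.0,
               variance: float = 1.0) -> np.ndarray:
    """Squared-exponential kernel matrix between 1-D input arrays a and b."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / length_scale ** 2)

def gp_posterior(x_train: np.ndarray, y_train: np.ndarray,
                 x_test: np.ndarray, noise: float = 1e-2):
    """Posterior mean and covariance of a zero-mean GP at x_test."""
    K = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_test)
    K_ss = rbf_kernel(x_test, x_test)
    mean = K_s.T @ np.linalg.solve(K, y_train)
    cov = K_ss - K_s.T @ np.linalg.solve(K, K_s)
    return mean, cov

x_train = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
y_train = np.sin(x_train)
x_test = np.array([0.5, 5.0])        # one point inside the data, one far outside
mean, cov = gp_posterior(x_train, y_train, x_test)
print(mean, np.diag(cov))
```

Drawing samples from a multivariate normal with this mean and covariance over a dense grid of test points would produce the "cloud of posterior functions" described above.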

The following pages of this blog describe the theory and implementation of this nonparametric Bayesian model and Gaussian process.

Explainable Machine Learning

Explainable machine learning refers to the ability to present the output of a machine learning algorithm in a form that allows the user to explain the reasons and rationale for the results.

Current technical trends in explainable machine learning are dominated by two approaches: (A) interpretation using inherently interpretable machine learning models, and (B) post-hoc interpretation (model-agnostic interpretation methods).
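As an example of approach (B), here is a minimal sketch of permutation feature importance, a model-agnostic post-hoc method: shuffle one feature column at a time and measure how much the model's error grows. The model below is a hypothetical hand-written function treated as a black box, and the data are synthetic.

```python
import random
from typing import Callable, List, Sequence

Model = Callable[[Sequence[float]], float]

def mse(model: Model, X: List[List[float]], y: List[float]) -> float:
    return sum((model(row) - t) ** 2 for row, t in zip(X, y)) / len(y)

def permutation_importance(model: Model, X: List[List[float]], y: List[float],
                           n_repeats: int = 10, seed: int = 0) -> List[float]:
    """Mean increase in MSE when each feature column is randomly shuffled."""
    rng = random.Random(seed)
    baseline = mse(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mse(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

def black_box(row: Sequence[float]) -> float:
    """Hypothetical opaque model; unknown to the explainer, it ignores feature 1."""
    return 3.0 * row[0]

X = [[float(i), float((i * 7) % 5)] for i in range(20)]
y = [3.0 * i for i in range(20)]
print(permutation_importance(black_box, X, y))
```

The method only calls the model, never inspects it, which is why it applies to any model; the explanation is about the model's behavior, not about the true data-generating process.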

In the following pages of this blog, we discuss the various approaches in this explainable machine learning technique.

Recommendation Technology

Recommendation technology using machine learning analyzes the user’s past behavior history, preference data, and other data to provide better personalized recommendations based on that data. The recommendation technology consists of the following flow. (1) create a user profile, (2) extract features of items, (3) train a machine learning model, and (4) generate recommendations from the created model.
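A toy version of this flow, simplified to user-based collaborative filtering, fits in a few lines: each user's profile is their rating dictionary, users are compared by cosine similarity, and unseen items are scored by a similarity-weighted average of other users' ratings. The users, items, and ratings are invented, and a production system would typically train a model rather than use this direct neighbourhood calculation.

```python
import math
from typing import Dict, List

Ratings = Dict[str, Dict[str, float]]

def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity of two sparse rating vectors (missing = 0)."""
    dot = sum(a[i] * b[i] for i in set(a) & set(b))
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def recommend(ratings: Ratings, user: str, top_n: int = 2) -> List[str]:
    """Rank items the user has not rated by similarity-weighted average rating."""
    weighted: Dict[str, float] = {}
    sim_total: Dict[str, float] = {}
    for other, their in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], their)
        if sim <= 0:
            continue  # ignore users with no overlap in taste
        for item, r in their.items():
            if item not in ratings[user]:
                weighted[item] = weighted.get(item, 0.0) + sim * r
                sim_total[item] = sim_total.get(item, 0.0) + sim
    predicted = {item: weighted[item] / sim_total[item] for item in weighted}
    return sorted(predicted, key=predicted.get, reverse=True)[:top_n]

ratings = {
    "alice": {"A": 5, "B": 4},
    "bob":   {"A": 5, "B": 5, "C": 4, "D": 1},
    "carol": {"C": 2, "E": 5},
}
print(recommend(ratings, "alice"))  # ['C', 'D']
```

Carol shares no rated items with Alice, so her similarity is 0 and her ratings do not influence Alice's recommendations.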

In this blog, specific implementation and theory of this recommendation technology are described in the following pages.

Analysis of Time Series Data

Time-series data is data whose values change over time, such as stock prices, temperatures, and traffic volumes. Applying machine learning to time-series data makes it possible to learn from large amounts of past data and make predictions about unseen data that support business decision making and risk management.

Typical methods include ARIMA (autoregressive integrated moving average model), LSTM (long short-term memory networks), Prophet (a library developed by Facebook that specializes in time-series forecasting), and state-space models. All of them learn from past time-series data to forecast the future.
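ARIMA and state-space models build on the autoregressive idea that the next value is a function of past values. The sketch below shows only the simplest piece, an AR(1) model x_t = c + φ·x_{t-1} fitted by ordinary least squares and used for multi-step forecasting; it omits the differencing and moving-average terms of full ARIMA, and the series is synthetic and noise-free so the fitted parameters can be checked.

```python
from typing import List, Tuple

def fit_ar1(series: List[float]) -> Tuple[float, float]:
    """Least-squares estimates of c and phi in x_t = c + phi * x_{t-1}."""
    prev, nxt = series[:-1], series[1:]
    n = len(prev)
    mean_prev, mean_next = sum(prev) / n, sum(nxt) / n
    cov = sum((a - mean_prev) * (b - mean_next) for a, b in zip(prev, nxt))
    var = sum((a - mean_prev) ** 2 for a in prev)
    phi = cov / var
    return mean_next - phi * mean_prev, phi

def forecast(last: float, c: float, phi: float, steps: int) -> List[float]:
    """Iterate the fitted recursion forward from the last observed value."""
    preds = []
    for _ in range(steps):
        last = c + phi * last
        preds.append(last)
    return preds

# Synthetic series generated by x_t = 2 + 0.5 * x_{t-1}, starting at 10.
series = [10.0]
for _ in range(9):
    series.append(2.0 + 0.5 * series[-1])
c, phi = fit_ar1(series)
print(round(c, 6), round(phi, 6))          # 2.0 0.5
print(forecast(series[-1], c, phi, 3))
```

With |φ| < 1 the forecasts converge to the long-run mean c / (1 - φ), here 4.0, which is typical of how autoregressive forecasts flatten out over longer horizons.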

In the following pages of this blog, we discuss theoretical overview, specific algorithms, and various applications of this time series data analysis.

Anomaly detection and change detection

Anomaly detection by machine learning is a technology aimed at detecting situations that differ significantly from the normal situation, while change detection is a technology aimed at detecting a change from one situation to another.

They can be used for the purpose of detecting any kind of abnormal behavior, for example, manufacturing line failures, network attacks, financial transaction fraud, etc., or for detecting temporal changes in sensor data, image data, etc.

In the following pages of this blog, we discuss various approaches to anomaly and change detection, starting with Hotelling’s T2 method and including Bayesian methods, nearest neighbor methods, mixture distribution models, support vector machines, Gaussian process regression, and sparse structure learning.
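A minimal sketch of the one-dimensional case of Hotelling's T2 method: fit the mean and variance of normal-period data, score a new observation by its squared standardized deviation, and flag it when the score exceeds a chi-squared threshold. The threshold 6.635 is approximately the upper 1% point of the chi-squared distribution with one degree of freedom, a common choice under a normality assumption; the sensor readings are made up.

```python
from typing import List, Tuple

CHI2_1DOF_99 = 6.635  # ~99th percentile of chi-squared with 1 degree of freedom

def fit_normal(data: List[float]) -> Tuple[float, float]:
    """Estimate mean and (maximum likelihood) variance from normal-period data."""
    n = len(data)
    mean = sum(data) / n
    return mean, sum((x - mean) ** 2 for x in data) / n

def anomaly_score(x: float, mean: float, var: float) -> float:
    """1-D Hotelling-style score: squared deviation in units of variance."""
    return (x - mean) ** 2 / var

normal_readings = [10.1, 9.8, 10.0, 10.3, 9.9, 10.2, 9.7, 10.0]
mean, var = fit_normal(normal_readings)
for x in (10.1, 10.4, 12.0):
    score = anomaly_score(x, mean, var)
    print(x, round(score, 2), "ANOMALY" if score > CHI2_1DOF_99 else "ok")
```

In the multivariate case the squared deviation generalizes to the Mahalanobis distance using the inverse covariance matrix, and the degrees of freedom of the threshold grow with the number of variables.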

Structural Learning

Learning the structure of data is important for interpreting what that data is. The simplest form of structure learning is hierarchical clustering, a basic machine learning algorithm that learns a tree structure (dendrogram) over the data. Other forms include relational data learning, which learns relationships between data, graph structure learning, and sparse structure learning.

The following pages of this blog discuss these structural learning methods.

Machine Learning Based on Sparsity

Sparsity-based machine learning is a machine learning method that uses sparsity to perform tasks such as feature selection and dimensionality reduction of high-dimensional data.

Sparsity here refers to the property that most elements (of the data, or of a model's parameters) are zero or close to zero and only a few are nonzero. It is exploited by models such as linear regression and logistic regression with L1 regularization, which drive most coefficients to exactly zero and thereby perform feature selection and dimensionality reduction. Because this improves interpretability for high-dimensional data, sparsity-based machine learning is widely used to analyze data and build predictive models in fields such as sensor data processing, image processing, and natural language processing.
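To see how L1 regularization produces exact zeros, here is a small coordinate-descent sketch for L1-regularized linear regression (the lasso), minimizing (1/2n)·||y - Xw||² + λ·||w||₁ with the soft-thresholding update. It assumes centered data with no intercept, and the tiny orthogonal design is chosen so the result can be checked by hand: the weak second feature, which ordinary least squares assigns a coefficient of 0.2, is set exactly to zero.

```python
from typing import List

def soft_threshold(rho: float, lam: float) -> float:
    """Shrink rho toward zero by lam; values within [-lam, lam] become 0."""
    if rho > lam:
        return rho - lam
    if rho < -lam:
        return rho + lam
    return 0.0

def lasso_coordinate_descent(X: List[List[float]], y: List[float], lam: float,
                             n_iters: int = 100) -> List[float]:
    n, p = len(X), len(X[0])
    w = [0.0] * p
    for _ in range(n_iters):
        for j in range(p):
            # Partial residual: target minus every feature's contribution except j.
            resid = [y[i] - sum(X[i][k] * w[k] for k in range(p) if k != j)
                     for i in range(n)]
            rho = sum(X[i][j] * resid[i] for i in range(n)) / n
            z = sum(X[i][j] ** 2 for i in range(n)) / n
            w[j] = soft_threshold(rho, lam) / z
    return w

X = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
y = [3.2, 2.8, -2.8, -3.2]  # = 3 * x0 + 0.2 * x1
print(lasso_coordinate_descent(X, y, lam=0.5))  # approximately [2.5, 0.0]
print(lasso_coordinate_descent(X, y, lam=0.0))  # approximately [3.0, 0.2] (least squares)
```

The same soft-thresholding step also shrinks the strong coefficient (3.0 becomes 2.5), which is the bias L1 regularization trades for sparsity.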

In the following pages of this blog, we discuss theoretical explanations, concrete implementations, and various applications of machine learning based on sparsity.

Overview of Kernel Methods and Support Vector Machines

The kernel method is a technique used in machine learning to handle nonlinear relationships. It measures the similarity between data points with a function called a kernel function, which computes the inner product between feature representations of two inputs without constructing those features explicitly. Kernel methods are mainly used in algorithms such as support vector machines (SVM), kernel principal component analysis (KPCA), and Gaussian processes (GP).
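The "inner product without explicit features" idea can be checked numerically. For 2-D inputs, the polynomial kernel k(x, y) = (x·y)² equals the ordinary inner product of the explicit feature map φ(x) = (x₁², √2·x₁x₂, x₂²), so a kernel method gets the benefit of those quadratic features without ever building them. The sketch also builds a Gram matrix with the RBF kernel, whose feature space is infinite-dimensional; the inputs and the `gamma` value are arbitrary.

```python
import math
from typing import Callable, List, Sequence, Tuple

Kernel = Callable[[Sequence[float], Sequence[float]], float]

def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(p * q for p, q in zip(a, b))

def poly2_kernel(x: Sequence[float], y: Sequence[float]) -> float:
    """Degree-2 homogeneous polynomial kernel (x . y)**2."""
    return dot(x, y) ** 2

def phi(x: Sequence[float]) -> Tuple[float, float, float]:
    """Explicit feature map whose inner product reproduces poly2_kernel in 2-D."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)

def rbf_kernel(x: Sequence[float], y: Sequence[float], gamma: float = 0.5) -> float:
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def gram_matrix(points: List[Sequence[float]], kernel: Kernel) -> List[List[float]]:
    """Pairwise kernel values: the only input an SVM or kernel PCA needs."""
    return [[kernel(p, q) for q in points] for p in points]

x, y = (1.0, 2.0), (3.0, 4.0)
print(poly2_kernel(x, y), dot(phi(x), phi(y)))  # both 121 up to rounding
print(gram_matrix([x, y, (0.0, 0.0)], rbf_kernel))
```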

In the following pages of this blog, we will discuss an overview of this kernel method, mainly the theory related to support vector machines, concrete implementation, and various applications.

Relational Data Learning

Relational data is data that represents what kind of “relationship” exists between each pair among N objects. If this relational data is represented as a matrix, the relationships are the matrix elements themselves, and relational data learning can be described as learning to extract patterns from this matrix.

There are two types of tasks to which these are applied: “prediction” and “knowledge extraction.”

Prediction is the problem of estimating the value of unobserved data using statistical models learned from observed data. Knowledge extraction is the task of extracting useful knowledge, rules, or information that leads to knowledge, by analyzing the characteristics of the relational data itself or by appropriately modeling the given observed data.

In the following pages of this blog, we provide a theoretical overview, specific algorithms, and various applications of relational data learning.

Topic Model Theory and Implementation

A topic model is a probabilistic generative model for extracting latent topics from a set of documents and understanding their content. A topic model can estimate which topics a given document covers; applied to large-scale text analysis, it can reveal, for example, which topics appear across a large number of news articles and blog posts and what trends they show.

Typical examples of topic models include Latent Dirichlet Allocation (LDA) and Probabilistic Latent Semantic Analysis (PLSA). These models extract latent topics by estimating topic distribution and word distribution based on the frequency of occurrence of the words that make up a document. Topic models are also applied not only to text analysis, but also to music, images, videos, and other fields.

The topic model can be used for various applications such as (1) news article analysis, (2) social media analysis, (3) recommendation, (4) image classification, and (5) music genre classification.

In the following pages of this blog, I discuss the basic theory and various applications of this topic model.

Causal Inference and Causal Search Techniques

Various machine learning approaches have been used to derive “correlations” from a vast amount of data. In contrast, deriving “causal relationships,” which are relationships between causes and effects, rather than mere “correlations,” is expected to be applied to various situations in the medical and manufacturing industries, but requires a statistical approach that is different from general machine learning approaches.

“Causal inference” and “causal search” techniques target “causal relationships” rather than such “correlations.” Both are methods for analyzing causal relationships, but they differ in purpose and approach: causal inference identifies and verifies causal relationships, while causal search (also called causal discovery) discovers them.

In the following pages of this blog, we discuss in detail the theory, implementation, and various applications of causal inference and causal search.

Submodular Optimization and Machine Learning

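Submodular functions are a concept corresponding to convex functions on discrete variables and are important in combinatorial optimization problems. “Combinatorial” refers to the procedure of “selecting a part of some selectable collection” and the various computational properties associated with it. Formally, a set function f is submodular if adding an element to a smaller set never gains less than adding it to a larger one: f(A ∪ {x}) - f(A) ≥ f(B ∪ {x}) - f(B) for all A ⊆ B and x ∉ B (the property of diminishing returns).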

Submodular functions are used to find efficient solutions to optimization problems such as minimization and maximization by using these properties, and have applications in a wide range of fields including information theory, machine learning, economics, and social sciences. Examples include social network analysis, image segmentation, search result ranking and query optimization, sensor networks, ad placement problems, and scheduling and power/transportation network optimization.
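A classic illustration is sensor placement framed as maximum coverage: the number of locations covered by a chosen set of sensors is a monotone submodular function, and the simple greedy algorithm that repeatedly adds the sensor with the largest marginal gain is guaranteed to reach at least (1 - 1/e) ≈ 63% of the optimal coverage under a limit on the number of sensors. The sensors and their coverage sets below are hypothetical.

```python
from typing import Dict, List, Set

def greedy_max_coverage(candidates: Dict[str, Set[int]], k: int) -> List[str]:
    """Pick up to k sets, each time taking the one that covers the most new items."""
    chosen: List[str] = []
    covered: Set[int] = set()
    for _ in range(k):
        remaining = [name for name in candidates if name not in chosen]
        if not remaining:
            break
        # Marginal gain f(S + {s}) - f(S); submodularity means it can only shrink.
        best = max(remaining, key=lambda name: len(candidates[name] - covered))
        if not candidates[best] - covered:
            break  # no remaining set adds anything new
        chosen.append(best)
        covered |= candidates[best]
    return chosen

sensors = {
    "s1": {1, 2, 3, 4},
    "s2": {4, 5, 6},
    "s3": {6, 7, 8},
    "s4": {1, 5, 7},
}
picked = greedy_max_coverage(sensors, k=2)
print(picked)  # ['s1', 's3']
```

After s1 is chosen, s2's gain drops from 3 to 2 because location 4 is already covered; this shrinking marginal gain is the diminishing-returns property that the greedy guarantee relies on.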

In the following pages of this blog, we describe the theory and implementation of this machine learning approach to submodular optimization.

Theory and Algorithms for the Bandit Problem

The bandit problem is a type of reinforcement learning in the field of machine learning, in which the agent must decide which arm to choose among multiple alternatives (arms). Each arm generates a reward according to a probability distribution that is unknown to the agent, and the agent discovers which arm is the most rewarding by drawing arms many times and receiving the rewards. The bandit problem is typically formulated under the following assumptions: (1) the agent selects each arm independently, (2) each arm generates rewards according to some probability distribution, (3) the rewards of the arms are observable to the agent, but the probability distributions are unknown, and (4) the agent receives rewards by drawing arms repeatedly.

In the bandit problem, the agent learns a strategy for selecting the arm with the maximum reward using algorithms such as the following: (1) the ε-greedy method (with a constant probability ε, select an arm at random; with the remaining probability 1-ε, select the arm with the highest estimated reward), (2) the UCB algorithm (select the arm with the highest upper confidence bound on its expected reward, which favors arms that are promising or still uncertain), and (3) Thompson sampling (draw a sample from the posterior distribution of each arm's reward parameters and select the arm whose sample is best).
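The first two strategies can be compared in a short simulation. The sketch below assumes three Bernoulli arms with made-up success probabilities 0.2, 0.5, and 0.8, uses the UCB1 variant of the UCB algorithm (bonus √(2 ln t / n_a), where n_a is the number of times arm a has been drawn), and keeps each arm's estimated reward as an incremental mean; the round count, ε, and seed are arbitrary.

```python
import math
import random
from typing import List, Tuple

def run_bandit(probs: List[float], policy: str, rounds: int = 2000,
               epsilon: float = 0.1, seed: int = 0) -> Tuple[List[int], float]:
    """Simulate Bernoulli arms; return pulls per arm and total reward."""
    rng = random.Random(seed)
    k = len(probs)
    counts = [0] * k          # times each arm was drawn
    values = [0.0] * k        # running mean reward of each arm
    total = 0.0
    for t in range(1, rounds + 1):
        if policy == "epsilon_greedy":
            if rng.random() < epsilon:
                arm = rng.randrange(k)                           # explore
            else:
                arm = max(range(k), key=lambda a: values[a])     # exploit
        elif policy == "ucb1":
            if 0 in counts:
                arm = counts.index(0)   # draw every arm once before using bounds
            else:
                arm = max(range(k), key=lambda a: values[a]
                          + math.sqrt(2 * math.log(t) / counts[a]))
        else:
            raise ValueError(f"unknown policy: {policy}")
        reward = 1.0 if rng.random() < probs[arm] else 0.0
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]  # incremental mean
        total += reward
    return counts, total

for name in ("epsilon_greedy", "ucb1"):
    counts, total = run_bandit([0.2, 0.5, 0.8], name)
    print(name, counts, total)
```

ε-greedy keeps spending about ε of its draws on random arms forever, while UCB1's exploration bonus shrinks as an arm is drawn more often, so it concentrates on the best arm without a fixed exploration rate.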

The bandit problem is also applied to real-world problems such as website ad serving and medical treatment selection. The following pages of this blog discuss the theory and various algorithms for the bandit problem.

Other Machine Learning

In this article, we will discuss Topological Data Analysis.

Topological data analysis is a method of analyzing a set of data using a “soft” geometry called topology. Machine learning is the operation of finding a model that fits given data well, and a model is a space expressed in terms of some parameters; the essence of machine learning is therefore to find a projection (function) from the data points to the space of the model.

Topology, on the other hand, is illustrated by the oft-used coffee cup and doughnut example. Suppose a coffee cup is made of an unbreakable, clay-like material; if we deform it little by little, without tearing or gluing, we can eventually transform it into a doughnut, so topologically the two shapes are the same.

- Semantic ML for Manufacturing Monitoring at Bosch

- Type-Constrained Representation Learning in Knowledge Graphs

- WebBrain: Joint Neural Learning of Large-Scale Commonsense Knowledge

- Learning Commonalities in SPARQL

- DWRank: Learning Concept Ranking for Ontology Search

- Completeness-aware Rule Learning from Knowledge Graphs

- Knowledge Graph Refinement: A Survey of Approaches and Evaluation Methods

- Rule Learning from Knowledge Graphs Guided by Embedding Models