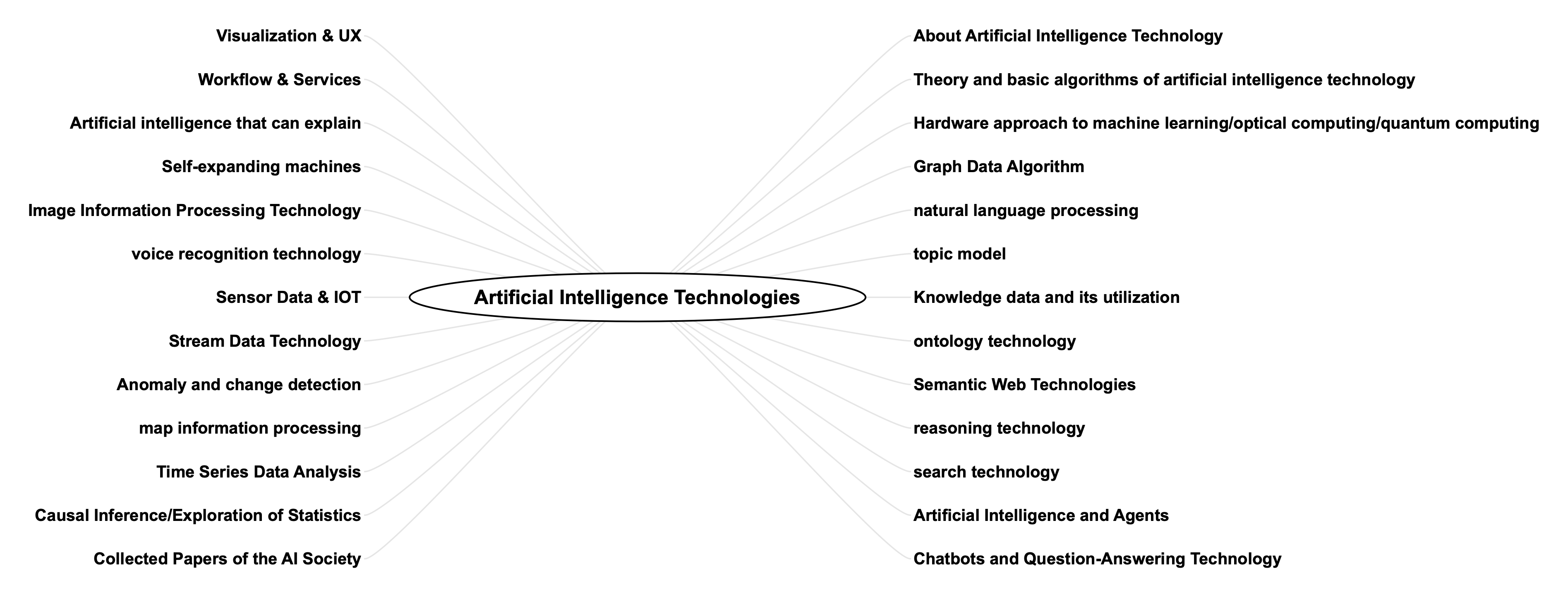

This section discusses information on artificial intelligence technology in the areas shown in the map below.

The details of each are described below.

About Artificial Intelligence Technology

Artificial intelligence can be defined as “a device (or software) artificially created to behave in a human-like manner. The term artificial intelligence was coined at the Dartmouth Conference in 1956. Early artificial intelligence was not good at large-scale calculations that consumed large amounts of memory and CPU power, as is the case with today’s machine learning, due in part to a significant lack of hardware power. The Dartmouth Conference was held in 1964, 70 years after the Dartmouth Conference. Nearly 70 years have passed since the Dartmouth Conference, and the scope of artificial intelligence technology is very broad.

In the following pages of this blog discuss some of these artificial intelligence technologies, especially those other than machine learning technologies.

Theory and basic algorithms of artificial intelligence technology

Other than machine learning, artificial intelligence technologies are applied to “inference” and “search” and other logical approaches to information.

In the following pages of this blog, we will discuss “What is Artificial Intelligence Technology,” “Information Theory & Computer Engineering,” “Basic Algorithms,” “Logic,” “Sphere Theory,” “Dynamic Programming,” “Genetic Algorithm and Particle Swarm Optimisation (PSO),” etc. as theories and basic algorithms of artificial intelligence technology. Optimisation (PSO), etc.

Graph Data Algorithm

Graphs are a way of expressing connections between objects such as objects and states. Since many problems can be attributed to graphs, many algorithms have been proposed for graphs.

In the following pages of this blog, we discuss the basic algorithms for graph data, such as the search algorithm, shortest path algorithm, minimum global tree algorithm, data flow algorithm, DAG based on strongly connected component decomposition, SAT, LCA, decision tree, etc., and applications such as knowledge data processing and Bayesian processing based on graph structures.

Automata and state transitions/Petri nets, automatic planning and counting problems

Automata theory is a branch of computational theory and one of the most important theories in computer science. By studying abstract computer models such as finite state machines (FSMs), pushdown automata, and Turing machines, automata theory is applied to solve problems in formal languages, formal grammars, computability, computability, and natural language processing.

A finite state machine (FSM) is a type of computer that transitions states with respect to an input sequence. diagram to change the current state upon receiving an input and generate the appropriate output.

Petri nets are a type of automata theory and can be one of the graphical modeling languages used for modeling concurrent systems. Petri nets were invented by Carl Adam Petri in 1962 and are widely used in fields such as industrial control and software engineering.

Automated Planning is a technique for automatically generating a sequence of actions to reach a certain goal state. Automated planning has been studied in fields such as computer science, artificial intelligence, and robotics.

In the following pages of this blog, we provide various information on automata, state transitions/Petri nets, and automated planning, including theory, concrete examples of use, and implementation.

Hardware approaches to machine learning – FPGA, optical computing, quantum computing

Currently, most computers use electricity to represent and calculate these numbers for ease of control, but a variety of media other than electricity have been used so far, including steam, light, magnetism, and quantum, each with its own characteristics.

In the following pages of this blog, after describing the basic principles of computers starting from how computers work on the following pages, we will focus on machine learning as the target of computation and describe machine learning with the simplest machine, Raspberry Pi, and then describe the case of using FPGA or ASIC as a computer dedicated to machine learning. Next, we will discuss the case of using FPGAs and ASICs as a computer dedicated to machine learning. I will also discuss quantum computing and optical computers as next-generation computers.

Natural Language Processing Technology

Language is a tool used for communication between people. It is easy for humans to learn to speak, and does not require any special talent or long and steady training. However, it is almost impossible for non-humans to control language. Language becomes a very mysterious thing.

Natural language processing is the study of using computers to handle such language. Initially, natural language processing was realized by writing a series of rules that said, “This is what a language is like. However, language is extremely diverse, constantly changing, and can be interpreted differently by different people and contexts. It is not practical to write down all of them as rules, and statistical inference based on data, i.e., actual natural language data, has been the mainstream since the late 1990s, replacing rule-based natural language processing. Statistical natural language processing is, to put it crudely, solving problems based on a model of how words are actually used.

In the following pages of this blog, we will first discuss natural language from the perspective of “what natural language is”, (2) natural language from the viewpoint of philosophy, linguistics, and mathematics, (3) natural language processing technology in general, and the most important of these, (4) word similarity. (3) natural language processing techniques in general, and (4) word similarity, which is particularly important among them. Then, it describes (5) various tools for using them in computers and their specific programming (6) implementation, and provides information that can be used for real-world tasks.

Knowledge Data and its Utilization

The question of how to handle knowledge as information is a central issue in artificial intelligence technology, and has been examined in various ways since the invention of the computer.

Knowledge in this context is expressed in natural language, which is then converted into information that can be handled by computers and computed. How do we represent knowledge and how do we handle the represented knowledge? And how to extract knowledge from various data/information? In this blog, we will discuss these issues in the following pages.

In the following pages of this blog, we discuss the handling of such knowledge information, including the definition of knowledge, approaches using Semantic Web technologies and ontologies, predicate logic based on mathematical logic, logic programming using Prolog, etc., and solution set programming as an application of these approaches.

Ontology Technology

Ontology is, philosophically, the study of the nature of being and existence itself, and in the fields of information science and knowledge engineering, it is a formal model or framework for systematizing information and structuring data.

Ontologies make it possible to systematize information such as concepts, things, attributes, and relations, and to represent them in a sharable format. They are used to improve data consistency and interoperability among different systems and applications in diverse fields such as information retrieval, database design, knowledge management, natural language processing, artificial intelligence, and the Semantic Web. There are also domain-specific ontologies, which are also used to help share and integrate information in specific fields and industries.

In the following pages of this blog, we will discuss the use of this ontology from the perspective of information engineering.

Semantic Web Technology

Semantic Web technology is “a project to improve the convenience of the World Wide Web by developing standards and tools that make it possible to handle the meaning of Web pages,” and it will evolve Web technology from the current WWW “web of documents” to a “web of data.

The data handled there is not Data in the DIKW (Data Information Knowledge Wisdom) pyramid, but Information and Knowledge information, expressed in ontologies, RDF and other frameworks for expressing knowledge, and used in various DX and AI tasks.

In the following pages of this blog, I discuss about this Semantic Web technology, ontology technology, and conference papers such as information of ISWC (International Semantic Web Conference), which is the world’s leading conference on Semantic Web technology.

Reasoning Technology

There are two types of inference methods: deduction, which is the process of deriving a proposition from a set of statements or propositions, and non-deduction methods, which are induction, projection, analogy, and abduction. Inference can be basically defined as a method of tracing the relationships among various facts.

As algorithms for finding them, the classical approaches are forward and backward inference. Machine learning approaches include relational learning, rule inference using decision trees, sequential pattern mining, and probabilistic generation methods.

Inference technology is a technology that combines such various methods and algorithms to obtain the inference results desired by the user.

In the following pages of this blog, we will discuss classical reasoning as represented by expert systems, the use of satisfiability problems (SAT), solution set programming as logic programming, inductive logic programming, etc.

Artificial Life and Agent

Artificial life is life that has been designed and created by humans. It is a field that studies systems related to life (life processes and evolution) by using biochemistry, computer models and robots to simulate life. The term “artificial life” was coined in 1986 by Christopher Langton, an American theoretical biologist. Artificial life is a complement to biology in that it attempts to “reproduce” biological phenomena. Artificial Life is also sometimes referred to as ALife. Depending on the means, it is called “soft ALife” (software on computers), “hard ALife” (robots), or “wet ALife” (biochemistry).

In recent philosophical discussions, one of the kick-starters for the expression of intelligence is life, and from intention (the direction of life), meaning arises in relationships. (xx semantics) From this point of view, it is a reasonable approach to substitute the function of some kind of life with a machine (computer) and feed it back to the artificial intelligence system.

In the following pages of this blog ,I will discuss them from philosophical, mathematical, and artificial intelligence perspectives.

Chatbots and Question & Answer Technology

Chatbot technology can be used as a general-purpose user interface in a variety of business domains, and due to its diversity of business opportunities, it is an area in which many companies are now entering.

The question-and-answer technology that forms the basis of chatbots is more than just user interface technology. It is the culmination of advanced technologies that combine artificial intelligence technologies such as natural language processing and inference, and machine learning technologies such as deep learning, reinforcement learning, and online learning.

In the following pages of this blog, we discuss a variety of topics related to chatbots and question-and-answer technology, from their origins, to business aspects, to a technical overview including the latest approaches, to concrete, ready-to-use implementations.

User Interface and DataVisualization

Using a computer to process data is equivalent to creating value by visualizing the structure within the data. In addition, data itself can be interpreted in multiple ways from multiple perspectives, and in order to visualize them, we need a well-designed user interface.

In the following pages of this blog, I will discuss various examples of this user interface, mainly focusing on papers presented at conferences such as ISWC.

Workflow & Services

It summarizes information on service platforms, workflow analysis, and their application to real business applications published in ISWC and other publications.

In the following pages of this blog, we discuss service platforms using the Semantic Web for business domains such as healthcare, law, manufacturing, and science.

Autonomous artificial intelligence and self-expanding machines

Comming soon

Image Processing Technology

With the development of modern internet technology and smartphones, the web is filled with a vast amount of images. One of the technologies that can create new value from this vast amount of images is computer-aided image recognition technology. This image recognition technology requires not only knowledge of image-specific constraints, pattern recognition, and machine learning, but also exclusive knowledge of the target application. In addition, due to the recent artificial intelligence boom triggered by the success of deep learning, a huge number of research papers on image recognition have been published, and it has become difficult to follow them. Because the content of image recognition is so vast and enormous, it is difficult to overview the entire field and acquire knowledge without clear guidelines.

In the following pages of this blog, we have discussed the theories and algorithms for image information processing techniques, as well as specific applications of deep learning using python/Keras and approaches using sparse models and probability generation models.

Speech Recognition

One area where machine learning technology can be applied is in the area of signal processing. These are mainly one-dimensional data that changes on the time axis, such as various sensor data and voice signal processing. Various machine learning techniques, including deep learning, are used for speech signal recognition.

In the following pages of this blog, we will discuss speech recognition technology, starting from the mechanism of natural language and speech, AD transform, Fourier transform, and applications such as speaker adaptation, speaker recognition, and noise tolerance speech recognition using methods such as Dynamic Programming (DP), Hidden Markov Model (HMM), and deep learning.

Geospatial Information Processing

The term “geospatial information” refers to information about location or information that is linked to location. For example, it is said that 80% of the information handled by the government used for LOD is linked to some kind of location information, and in an extreme case, if the location where the information occurred is recorded together with the information, all information can be called “geospatial information.

By handling information in connection with location, it is possible to grasp the distribution of information even by simply plotting location information on a map. If you have data on roads and destinations linked to latitude and longitude, you can guide a person with a GPS device to a desired location, or track his or her movements. By taking the trajectory of how a person moves, it is possible to provide services based on location with information on past, present, and future events.

By making good use of these features of location information, it will be possible to make new scientific discoveries, develop services in business, and solve various social problems.

In the following pages of this blog, we will discuss how to use QGIS, a geospatial information platform, and how to combine it with R and various machine learning tools, as well as with Bayesian models.

Sensor Data and IOT

The use of sensor information is a central element of IOT technology. There are various types of sensor data, but here we will focus on one-dimensional, time-varying information.

There are two types of IOT approaches: one is to set up individual sensors for a specific measurement target and analyze the characteristics of the target in detail, and the other is to set up multiple sensors for multiple targets as described in “Application of Sparse Model to Anomaly Detection”, and select specific data from the obtained data to make decisions such as anomaly detection for a specific target.

In the following pages of this blog, we will discuss various IOT standards (WoT, etc.), statistical processing as time series data, stochastic refinement models such as hidden Markov models, sensor placement optimization by inferior modular optimization, control of hardware such as BLE, smart cities, and a wide range of other areas of knowledge.

Anomaly detection and change detection

In any business setting, it is extremely important to be able to detect changes or signs of anomalies. For example, by detecting changes in sales, we can quickly take the next step, or by detecting signs of abnormalities in a chemical plant in operation, we can prevent serious accidents from occurring. This will be very meaningful when considering digital transformation and artificial intelligence tasks.

In addition to extracting rules, it is now possible to build a practical anomaly and change detection system by using statistical machine learning techniques. This is a general-purpose method that uses the probability distribution p(x) of the possible values of the observed value x to describe the conditions for anomalies and changes in mathematical expressions.

In the following pages of this blog, I have described various approaches to anomaly and change detection, starting from Hotelling’s T2 method, Bayesian method, neighborhood method, mixture distribution model, support vector machine, Gaussian process regression, and sparse structure learning.

Stream Data Technology

This world is full of dynamic data, not static data. For example, a huge amount of dynamic data is formed in factories, plants, transportation, economy, social networks, and so on. In the case of factories and plants, a typical sensor on an oil production platform makes 10,000 observations per minute, peaking at 100,000 o/m. In the case of mobile data, a mobile user in Milan makes 20,000 calls/SMS/data connections per minute, and 20,000 connections per minute. In the case of mobile data, mobile users in Milan make 20,000 calls/sms/data connections per minute, reaching 20,000 connections per minute and 80,000 connections at peak times, and in the case of social networks, Facebook, for example, observed 3 million likes per minute as of May 2013. as of May 2013.

Use cases where these data appear include “What is the expected timing of failure when the turbine barring starts to vibrate in the last 10 minutes? What is the expected failure time when the turbine barring starts to vibrate, as detected in the last 10 minutes?” or “Is there public transportation where people are?” or “Who is discussing the top ten topics? These are just a few of the many granular issues that arise, and solutions to them are needed.

In the following pages of this blog, we discuss real-time distributed processing frameworks for handling such stream data, machine learning processing of time series data, and application examples such as smart cities and Industry 4.0 that utilize these frameworks.

Collected AI Conference Papers

In the following page in this blog, AI-related conference papers from AAAI, ISWC, ILP, RW, etc. were collected based on their proceedings.