About Machine Learning Technology

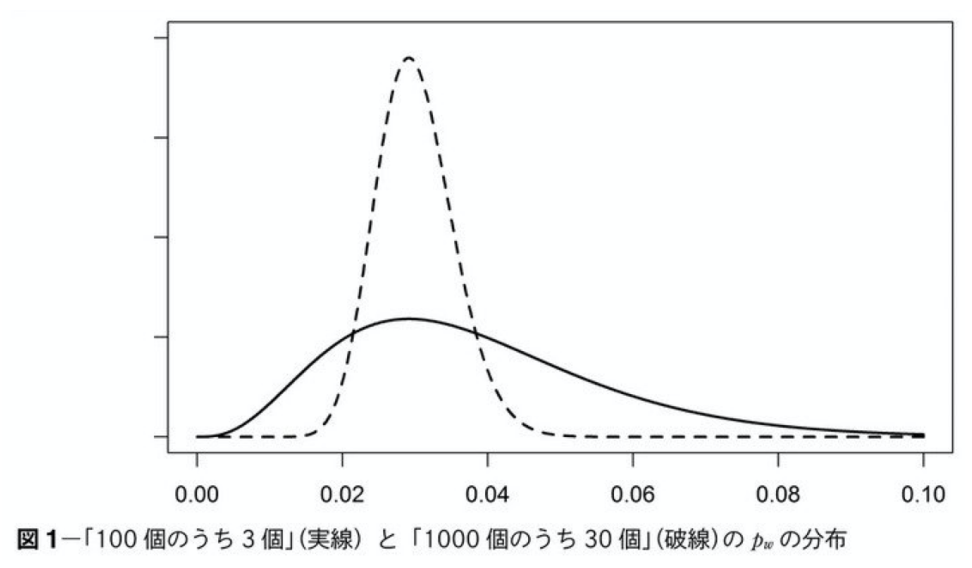

According to Wikipedia, machine learning is “a computer algorithm that automatically improves by learning from experience,” or the research area that studies such algorithms. In this sense, machine learning is a technology that attempts to give computers the same kind of learning ability that humans have naturally. It also has a philosophical side, as basic research into the question “what is the ability to learn?”

In contrast, a more engineering-oriented definition that focuses on data utilization has become widely used in recent years. Under this definition, machine learning focuses on practical use: mathematically analyzing the characteristics of data, extracting the rules and structures hidden in it, and using them to predict unknown phenomena and make judgments based on those predictions.

From this engineering perspective, the basic process is to learn from a large amount of training data in order to find patterns and rules in things and phenomena. If the training data are generated according to some rules, learning from data amounts to imitating and reproducing those generating rules as closely as possible, using the computing power of a computer. This does not mean simply storing the training data as is. It means clarifying (abstracting) the model (patterns and rules) behind the data so that judgments can be made when data other than the training data are input. This is called generalization.

Various machine learning models have been proposed, ranging from very simple ones such as the linear regression model to deep learning models with thousands to hundreds of millions of parameters. Which one to use depends on the data to be observed (number of samples, characteristics, etc.) and the purpose of learning (whether prediction alone is enough, whether the results must be explained, etc.). The following examples show how the required model differs depending on the data and the purpose.
First, regarding the data, the figure shows the probability distributions for the same percentage of correct answers with different numbers of samples. Both curves are normal distributions for data in which 3% of the answers are correct. The wavy line represents the case of 1000 samples with 30 correct answers, and the solid line represents the case of 100 samples with 3 correct answers. As the figure shows, when the number of samples is small, the base of the distribution widens greatly, and rates of correct answers other than 3% become more probable. In other words, the variability increases.
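
The difference in width can be put into numbers. The sketch below assumes the normal approximation to the binomial distribution, under which an observed rate p from n samples has a standard error of sqrt(p(1 - p) / n). The function name `proportion_spread` is made up for this example, and the approximation is rough when only 3 answers are correct.

```python
import math

def proportion_spread(correct, samples):
    """Return (rate, standard error) for an observed proportion.

    Assumes the normal approximation to the binomial distribution.
    """
    rate = correct / samples
    std_err = math.sqrt(rate * (1.0 - rate) / samples)
    return rate, std_err

# The two cases described in the text: same 3% rate, different sample sizes.
for correct, samples in [(3, 100), (30, 1000)]:
    rate, std_err = proportion_spread(correct, samples)
    low = max(0.0, rate - 1.96 * std_err)   # clip at 0: a rate cannot be negative
    high = rate + 1.96 * std_err
    print(f"n={samples}: rate={rate:.3f}, std_err={std_err:.4f}, "
          f"approx. 95% range=({low:.3f}, {high:.3f})")
```

With ten times fewer samples, the standard error is larger by a factor of sqrt(10), roughly 3.2, which corresponds to the wider base of the 100-sample curve.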

When there is little training data, as in this case, the variability of each estimated parameter becomes large and many uncertainties intervene, so the probability of choosing an incorrect answer increases. Therefore, when the number of samples is small, it is necessary to consider a model with fewer parameters, such as a sparse model, to find the answer. (In real-life cases, the number of samples is more often small than large.)
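
One common way to obtain a sparse model is L1 regularization (the lasso), whose basic building block is the soft-thresholding operator: coefficients whose magnitude is below a threshold are set exactly to zero, and the rest are shrunk toward zero. The text does not name a specific method, so the following is only an illustrative sketch. The function name `soft_threshold`, the coefficient values, and the threshold 0.3 are assumptions for this example.

```python
def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold; return 0.0 if it lies within the band."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0

# Hypothetical coefficients estimated from a small, noisy sample.
coefficients = [2.5, -0.1, 0.05, -1.5, 0.2]

# Small coefficients are removed entirely, leaving a model with fewer parameters.
sparse = [soft_threshold(c, 0.3) for c in coefficients]
kept = sum(1 for c in sparse if c != 0.0)
print(f"{kept} of {len(coefficients)} coefficients remain non-zero")
```

Only the two large coefficients survive, so the resulting model has fewer parameters whose variability can affect the answer.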

Also, when the purpose of the task is not simply to predict data but to find out why the data are the way they are (e.g., when a judgment must be made on the basis of the predicted data), it is necessary to consider a white-box approach that uses a certain amount of carefully selected data, rather than a black-box approach that uses a large number of parameters.
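
As a small illustration of the white-box idea, a one-feature threshold rule (a decision stump) is a model whose entire logic can be read directly. This is a sketch under assumptions, not a method prescribed by the text: the function name `fit_stump` and the temperature and fault data are invented for the example.

```python
def fit_stump(values, labels):
    """Find the threshold t that maximizes accuracy of the rule 'predict 1 if value > t'."""
    best_threshold, best_correct = None, -1
    ordered = sorted(set(values))
    # Candidate thresholds are midpoints between neighbouring distinct values.
    candidates = [(a + b) / 2 for a, b in zip(ordered, ordered[1:])]
    for t in candidates:
        correct = sum((v > t) == bool(y) for v, y in zip(values, labels))
        if correct > best_correct:
            best_threshold, best_correct = t, correct
    return best_threshold, best_correct / len(values)

# Hypothetical data: machine temperature readings and whether a fault occurred.
temperatures = [61, 64, 66, 70, 73, 78, 81, 85]
faults = [0, 0, 0, 0, 1, 0, 1, 1]

threshold, accuracy = fit_stump(temperatures, faults)
print(f"Rule: predict a fault if temperature > {threshold} "
      f"(training accuracy {accuracy:.3f})")
```

The learned model is a single sentence that a person can inspect and challenge, which is what makes it usable when the reason behind a prediction matters.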

Putting all this together, we need to consider the following three issues in order to apply machine learning to real-world tasks.

- How to approach the problem to be solved (including the fundamental question of why the problem occurs)

- What kind of model to apply to the problem (consideration of various models)

- How to compute those models using realistic computational methods (algorithms)

Typical machine learning tasks and models include the following.

- Regression: Finding a function that predicts a continuous-valued output from a given input. Linear regression is the most basic form. (It is called “linear regression” because the output is computed as a weighted sum of the inputs, that is, it is linear in the parameters.)

- Classification: A model in which the output is limited to a finite number of symbols (classes). There are various algorithms; one example is logistic regression, which uses the sigmoid function in the actual calculation.

- Clustering: A task that divides input data into K sets according to some criteria. Various models and algorithms are available.

- Linear dimensionality reduction: A task performed in order to process high-dimensional data, which are common in real-world systems. It is based mainly on linear algebra, such as matrix computations.

- Separation of time series data such as signal sequences: Tasks that predict how data connect over time on the basis of probabilistic models. They are also used to recommend what will appear next or to interpolate missing data.

- Sequence pattern extraction: Learning sequence patterns of genes, workflows, etc.

- Deep learning: Models that learn thousands to hundreds of millions of parameters. It can be used to improve results in tasks such as image processing.
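
The first three tasks in the list (regression, classification, and clustering) can each be sketched in a few lines of plain Python. This is a minimal illustration rather than a production implementation. The function names (`fit_line`, `fit_logistic`, `kmeans_1d`), the learning rate, the step counts, and the toy data are all assumptions chosen for this example, and every function is restricted to a single input variable.

```python
import math

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (one input variable)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def sigmoid(z):
    """Map any real number to the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, labels, rate=0.1, steps=5000):
    """Logistic regression on one input, trained by batch gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the average log loss with respect to w and b.
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, labels)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= rate * grad_w
        b -= rate * grad_b
    return w, b

def kmeans_1d(points, centers, iterations=20):
    """Lloyd's algorithm for K-means on scalars; K is the number of initial centers."""
    centers = list(centers)
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: abs(p - centers[i]))
            groups[nearest].append(p)
        # Move each center to the mean of its group; keep it if the group is empty.
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups

# Regression: points lying exactly on y = 2x + 1.
slope, intercept = fit_line([0, 1, 2, 3], [1, 3, 5, 7])

# Classification: the label is 1 for larger inputs.
w, b = fit_logistic([-2.0, -1.0, -0.5, 0.5, 1.0, 2.0], [0, 0, 0, 1, 1, 1])
prob_high = sigmoid(w * 1.5 + b)

# Clustering: two well-separated groups, K = 2.
centers, groups = kmeans_1d([1.0, 1.2, 0.8, 9.0, 9.5, 8.5], centers=[0.0, 5.0])

print(f"line: y = {slope:.2f}x + {intercept:.2f}")
print(f"P(class 1 | x = 1.5) = {prob_high:.3f}")
print(f"cluster centers: {[round(c, 2) for c in centers]}")
```

The regression output is a continuous value, the classifier outputs a probability that is turned into one of a finite set of labels, and the clustering step needs no labels at all, which mirrors the distinctions drawn in the list.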

Specialized in AI system design and decision-making architecture.

Focused on externalizing decision logic using Ontology, DSL, and Behavior Trees, and building multi-agent systems.