Introduction

In the past, I implemented AI systems with Light DTM (Decision Trace Model) in two very different domains:

- A manufacturing environment

- An IT customer support operation

Interestingly, the AI systems themselves were almost identical.

They consisted of:

- LLM-based classification models (GPT/BERT) for intent and category detection

- Anomaly detection models (e.g., autoencoders) for deviation detection

- Rule-based + statistical models for scoring risk and importance

These components produced outputs such as:

- Classification

- Anomaly detection

- Scoring

In other words, they were all responsible for generating Signals using machine learning models.

From a technical perspective, the AI itself was essentially the same across domains.

The Key Observation

However, during implementation, I realized something critical.

Even though we used the same AI,

the final decisions were completely different.

In manufacturing:

anomaly detected → stop_machine

uncertain → human_review

The core decision was:

👉 Should we stop the machine?

In IT customer support:

critical case → human

otherwise → auto_reply

The core decision was:

👉 Should this be handled by a human or automated?
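The contrast can be sketched in a few lines of Python. Both functions read the same model outputs, and only the decision rule differs. The field names, the thresholds (0.8, 0.6, 0.7), and the fallback action `continue` are illustrative assumptions, not values from the original systems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """Model outputs only; nothing here decides anything. Field names are hypothetical."""
    anomaly_score: float  # 0..1, higher means further from normal behavior
    risk_score: float     # 0..1, higher means more critical
    confidence: float     # 0..1, classifier confidence


def decide_manufacturing(s: Signal) -> str:
    # Safety first: stop on an anomaly, involve a human when uncertain.
    if s.anomaly_score >= 0.8:
        return "stop_machine"
    if s.confidence < 0.6:
        return "human_review"
    return "continue"  # assumed fallback; not part of the original rule


def decide_support(s: Signal) -> str:
    # Speed first: automate unless the case is critical.
    if s.risk_score >= 0.7:
        return "human"
    return "auto_reply"


same_signal = Signal(anomaly_score=0.85, risk_score=0.3, confidence=0.9)
print(decide_manufacturing(same_signal))  # stop_machine
print(decide_support(same_signal))        # auto_reply
```

Feeding one signal to both functions yields `stop_machine` in one domain and `auto_reply` in the other: the models are shared, the priorities are not.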

The models could:

- Detect anomalies

- Classify inputs

- Generate scores

But what was ultimately required was something else:

- Should we stop?

- Should we escalate to a human?

- Should we proceed automatically?

The Real Problem

At that moment, it became clear:

The problem was not AI.

The problem was decision-making.

And more importantly:

What differed across domains was what to prioritize when making decisions.

The Core Insight: Decision Priorities

Let’s take a closer look.

Manufacturing

The highest priority is safety.

Even if cost increases

Even if efficiency decreases

👉 Safety must always come first

Therefore:

- If there is an anomaly → stop

- If uncertain → involve a human

Because:

A wrong decision can lead to accidents

IT / Customer Support

The key priorities are:

- Speed of response (SLA)

- Customer experience

If everything is escalated to humans:

- Response time slows

- Customer satisfaction drops

Therefore:

- Automate what can be automated

- Escalate only critical cases

Because:

Mistakes lead to complaints and churn

What This Means

Even with the same AI:

👉 The decision structure changes completely depending on the domain

And the reason is:

👉 Each domain has different decision priorities

This Is the Essence of Decision

AI can:

- Classify

- Predict

- Score

But it does not decide:

👉 What should be prioritized

Designing that part is what we call:

Decision Design

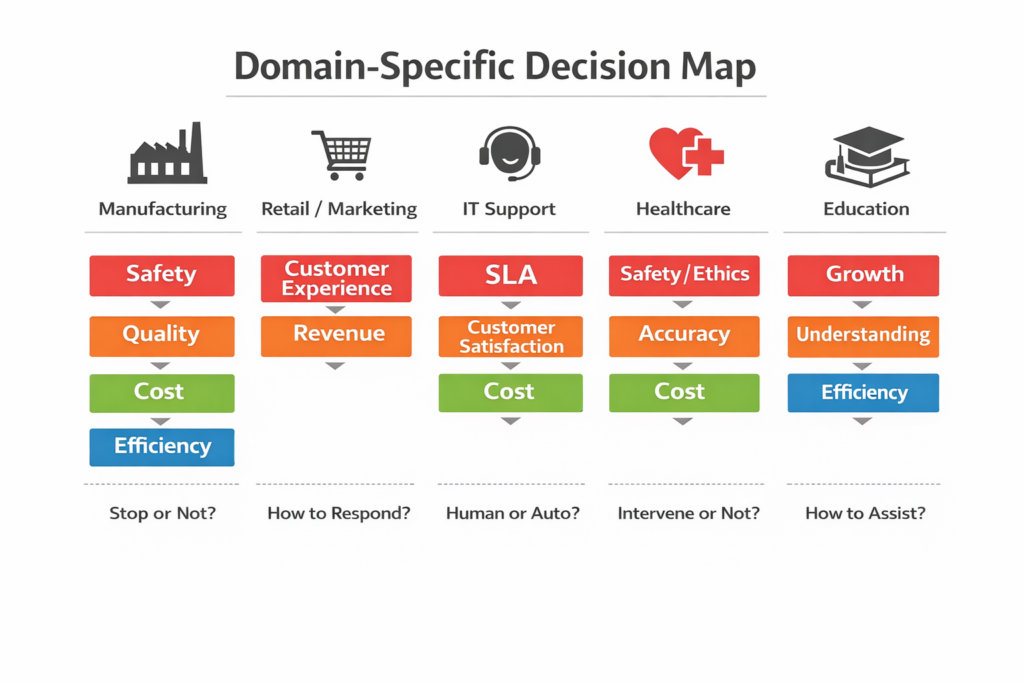

Decision Priorities by Domain

When we organize decisions through this lens, the differences become very clear.

The key point is:

Priorities don’t just differ

They directly shape the form of decisions

■ Manufacturing

👉 Decision: Should we stop?

■ Retail / Marketing

👉 Decision: How should we respond?

■ IT / Customer Support

👉 Decision: Human or automation?

■ Healthcare

👉 Decision: Should a human intervene?

■ Education

👉 Decision: What kind of support should be provided?

Summary

Across industries:

👉 What is prioritized is fundamentally different

And those priorities:

👉 Directly determine how decisions are made

What Light DTM Does

Light DTM takes a very simple approach:

It externalizes decision priorities

In traditional AI systems:

- Signals are generated

- But decisions remain implicit

As a result:

- Decisions vary by person

- Reasons are unclear

- Improvements are difficult

Light DTM addresses this by making the decision logic explicit.

For example, in manufacturing:

anomaly detected → stop_machine

This is not just a rule.

It expresses:

“Safety is prioritized, so we stop when anomalies appear.”

In IT support:

critical case → human

This expresses:

“Critical cases should be handled by humans.”
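One way to keep the priority attached to the rule is to store the reason next to the condition and return it together with the action. The `Rule` class, its fields, the thresholds, and the fallback actions with their reasons are hypothetical. Only the two rule reasons are taken from the text above.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Sequence, Tuple

Signals = Mapping[str, float]


@dataclass(frozen=True)
class Rule:
    condition: Callable[[Signals], bool]  # predicate over model outputs
    action: str                           # what to do when the condition holds
    reason: str                           # the priority this rule expresses


def decide(rules: Sequence[Rule], default: Tuple[str, str], signals: Signals) -> Tuple[str, str]:
    """Return (action, reason) for the first matching rule, else the default pair."""
    for rule in rules:
        if rule.condition(signals):
            return rule.action, rule.reason
    return default


manufacturing = [
    Rule(lambda s: s["anomaly_score"] >= 0.8, "stop_machine",
         "Safety is prioritized, so we stop when anomalies appear."),
]
support = [
    Rule(lambda s: s["risk_score"] >= 0.7, "human",
         "Critical cases should be handled by humans."),
]

action, reason = decide(manufacturing, ("continue", "No anomaly detected."),
                        {"anomaly_score": 0.9})
print(action, "|", reason)
# stop_machine | Safety is prioritized, so we stop when anomalies appear.

print(decide(support, ("auto_reply", "Non-critical cases are automated for speed."),
             {"risk_score": 0.2}))
# ('auto_reply', 'Non-critical cases are automated for speed.')
```

Because the reason travels with the action, a log entry can say why a case was escalated, not just that it was.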

What This Really Means

Light DTM is not about writing if statements.

It is about:

Extracting and structuring

what the domain values and prioritizes in decision-making

Importantly:

👉 You don’t need to change the models

You only need to define:

- Which signals to use

- Where to set thresholds

- When to involve humans

- When to stop
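Those four choices can live in a plain configuration file that is read at runtime, so changing a threshold never touches model code. The JSON layout below (`signals`, `rules`, `default`) and the small operator set are a hypothetical format for illustration, not a published Light DTM format.

```python
import json
import operator

# Comparison operators a policy file may use (a deliberately small, hypothetical set).
OPS = {">=": operator.ge, "<": operator.lt}

MANUFACTURING = json.loads("""
{
  "signals": ["anomaly_score", "confidence"],
  "rules": [
    {"signal": "anomaly_score", "op": ">=", "threshold": 0.8, "action": "stop_machine"},
    {"signal": "confidence", "op": "<", "threshold": 0.6, "action": "human_review"}
  ],
  "default": "continue"
}
""")

SUPPORT = json.loads("""
{
  "signals": ["risk_score"],
  "rules": [
    {"signal": "risk_score", "op": ">=", "threshold": 0.7, "action": "human"}
  ],
  "default": "auto_reply"
}
""")


def decide(policy: dict, model_output: dict) -> str:
    # Which signals: rules only see the signals the policy declares.
    visible = {name: model_output[name] for name in policy["signals"]}
    # Thresholds, human and stop conditions: first matching rule wins, so order encodes priority.
    for rule in policy["rules"]:
        if OPS[rule["op"]](visible[rule["signal"]], rule["threshold"]):
            return rule["action"]
    return policy["default"]


model_output = {"anomaly_score": 0.85, "risk_score": 0.3, "confidence": 0.9}
print(decide(MANUFACTURING, model_output))  # stop_machine
print(decide(SUPPORT, model_output))        # auto_reply
```

With this layout, moving the human-review threshold from 0.6 to 0.5 is a one-line edit to the policy file that can be reviewed like any other change.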

Then:

Decision-making shifts from implicit behavior

to explicit design

From Light DTM to Full DTM

Light DTM is just the beginning.

As systems evolve, simple rules are no longer enough.

You start needing:

- Multiple signals

- Sequential decisions

- Conditional flows

- Human approvals

- Context awareness
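To illustrate sequential decisions with a human approval step, here is a minimal sketch of a flow that runs steps in order, pauses when a step needs a person, and resumes from the same step once a decision is recorded. The step functions, the `FlowState` fields, the `WAIT` sentinel, and the thresholds are all hypothetical; a real system might use a workflow engine instead.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class FlowState:
    signals: dict
    step: int = 0                         # index of the step to run next
    human_decision: Optional[str] = None  # filled in by a reviewer while the flow waits


WAIT = "WAIT"  # sentinel: pause until a human records a decision


def safety_gate(s: FlowState) -> Optional[str]:
    # Hard stop on a clear anomaly; otherwise pass to the next step.
    return "stop_machine" if s.signals["anomaly_score"] >= 0.8 else None


def uncertainty_gate(s: FlowState) -> Optional[str]:
    # Low confidence requires a human decision before the flow can finish.
    if s.signals["confidence"] >= 0.6:
        return None
    return s.human_decision or WAIT


def run(steps: List[Callable[[FlowState], Optional[str]]], s: FlowState) -> str:
    while s.step < len(steps):
        result = steps[s.step](s)
        if result == WAIT:
            return "waiting_for_human"  # step index is kept, so run() resumes here
        if result is not None:
            return result               # a step made the decision
        s.step += 1                     # no decision yet: go to the next step
    return "continue"                   # assumed default when no step decides


steps = [safety_gate, uncertainty_gate]
state = FlowState(signals={"anomaly_score": 0.3, "confidence": 0.4})
print(run(steps, state))  # waiting_for_human
state.human_decision = "stop_machine"
print(run(steps, state))  # stop_machine
```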

What Full DTM Enables

Full DTM expands decision-making into a structured system:

1. Multi-signal integration

Decisions based on multiple inputs, not a single score

2. Decision flow control

Order, branching, waiting, resuming

3. Explicit Human & Boundary layers

Who decides, when, and under what conditions

4. Integration of domain knowledge

Ontology-driven decision logic

5. Multi-agent perspectives

Different viewpoints (risk, cost, UX, etc.)

6. Decision traceability

Record, replay, and improve decisions
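Decision traceability can be sketched as an append-only log: each entry stores the inputs, the policy version, and the outcome, so past cases can be replayed against a revised policy to see which decisions would change. The `TraceEntry` fields, the `sentiment` signal, and both policy versions are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable, Dict, List

Policy = Callable[[Dict[str, float]], str]


@dataclass(frozen=True)
class TraceEntry:
    case_id: str
    signals: Dict[str, float]  # multiple inputs, not a single score
    policy_version: str
    action: str


def policy_v1(s: Dict[str, float]) -> str:
    return "human" if s["risk_score"] >= 0.7 else "auto_reply"


def policy_v2(s: Dict[str, float]) -> str:
    # Hypothetical revision: also escalate strongly negative sentiment at moderate risk.
    if s["risk_score"] >= 0.7 or (s["risk_score"] >= 0.5 and s["sentiment"] < -0.5):
        return "human"
    return "auto_reply"


def record(log: List[TraceEntry], case_id: str, signals: Dict[str, float],
           version: str, policy: Policy) -> None:
    # Store a copy of the inputs so later changes to `signals` cannot alter the trace.
    log.append(TraceEntry(case_id, dict(signals), version, policy(signals)))


def replay(log: List[TraceEntry], policy: Policy) -> List[str]:
    """Return the case IDs whose action would differ under `policy`."""
    return [e.case_id for e in log if policy(e.signals) != e.action]


log: List[TraceEntry] = []
record(log, "c1", {"risk_score": 0.9, "sentiment": 0.0}, "v1", policy_v1)
record(log, "c2", {"risk_score": 0.6, "sentiment": -0.8}, "v1", policy_v1)
record(log, "c3", {"risk_score": 0.2, "sentiment": -0.9}, "v1", policy_v1)

print(json.dumps(asdict(log[1])))  # each entry serializes cleanly for audit storage
print(replay(log, policy_v2))      # ['c2']
```

Replaying the log before deploying `policy_v2` shows exactly which past cases it would have handled differently, turning a policy change into something that can be reviewed case by case.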

The Key Shift

Decisions become:

- Reproducible

- Auditable

- Continuously improvable

Relationship Between Light and Full DTM

Light DTM = Entry point

Full DTM = Scalable structure

You don’t need everything from the start.

But if you:

Externalize decisions early

You can expand naturally later.

Below is a comparison of the Light and Full configurations for a complaint-inquiry use case.

Conclusion

Even with the same AI, outcomes differ.

Not because of model accuracy.

Not because of algorithms.

But because of:

What is prioritized in decision-making

AI can generate answers.

But it cannot:

- Decide

- Take responsibility

- Define priorities

Those belong outside the model.

Decision-making is not something AI does.

It is something we design.

And that design lives:

Not inside the model

But on our side


Specialized in AI system design and decision-making architecture.

Focused on externalizing decision logic using Ontology, DSL, and Behavior Trees, and building multi-agent systems.