Why Does AI Stop at the Field Level?

POCs succeed.

Yet AI stops in real-world operations.

What actually happens in practice

- The POC succeeds

- Accuracy is achieved

- The demo works

However:

- It is not used in production

- It eventually stops without notice

- No one takes responsibility

Common Failure Patterns

1. AI only predicts

Predictions are generated.

But there is no definition of what to do next.

→ Stuck at Signal (AI output)

2. Decision-making is a black box

No one understands why the result was produced.

- The reasoning cannot be explained

- Responsibility cannot be assigned

- Therefore, the field does not trust or use it

3. No clear ownership of responsibility

- “The AI said so”

- “A human made the decision”

→ Responsibility becomes ambiguous

4. Cannot be integrated into systems

It does not connect to operational workflows.

- It is not defined as a decision

- It cannot be translated into execution

→ Therefore, it is not used

The Core Issue

This is not a problem of AI itself.

The real issue is:

The structure of decision-making does not exist.

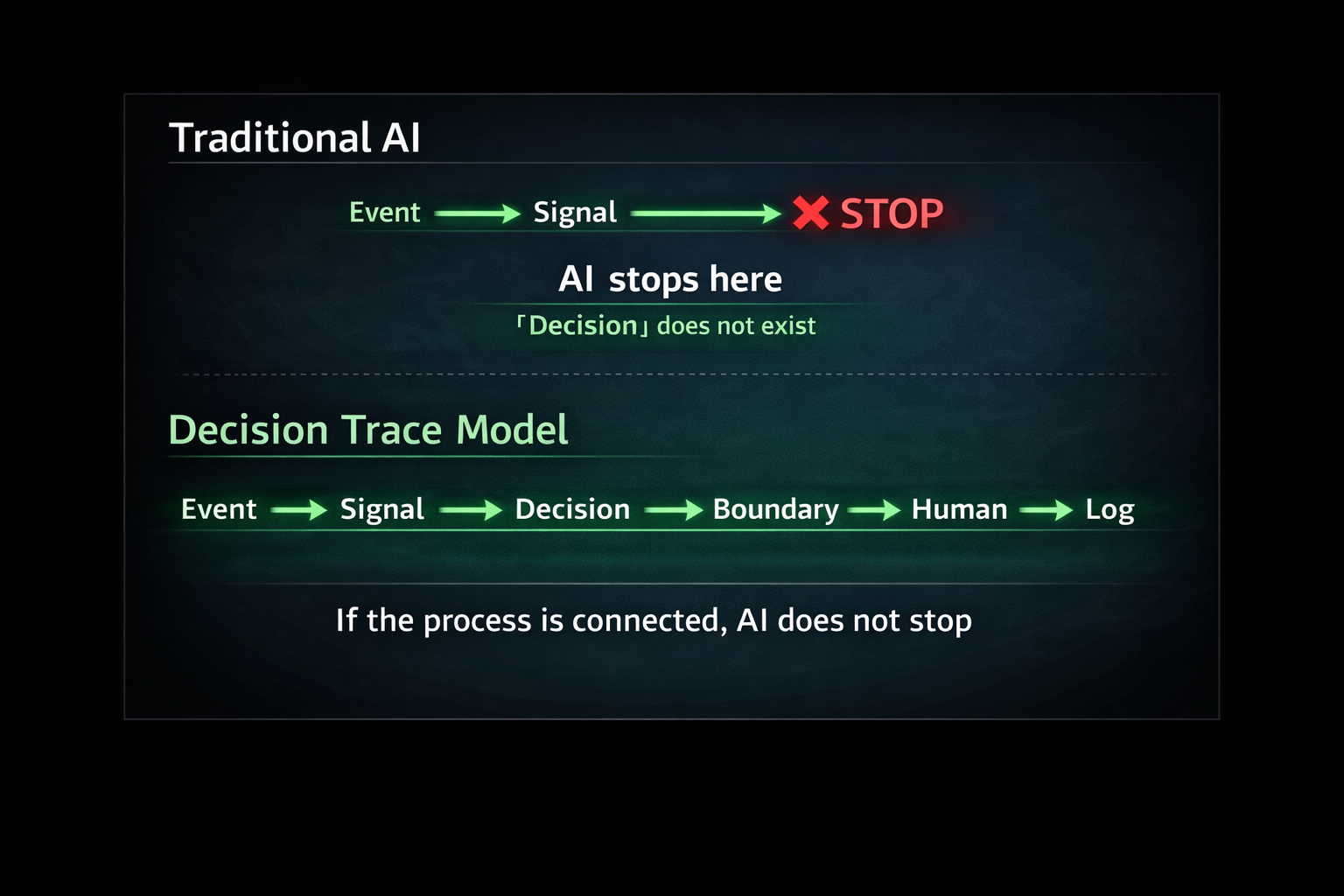

How decisions actually work in an AI system

Decision-making inherently follows this flow:

Event → Signal → Decision → Boundary → Human → Log

- Event: What happened

- Signal: Prediction (e.g., AI output)

- Decision: What to do

- Boundary: Constraints and policies

- Human: Human involvement

- Log: Record of the decision

In simple terms:

- Event: What happened (e.g., a user viewed a product)

- Signal: AI prediction (e.g., 70% probability of purchase)

- Decision: What to do (e.g., whether to offer a discount)

This is how decisions should be structured and executed in practice.
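The flow above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a reference implementation: the class names, the discount rule, the daily limit, and the `hold_for_review` action are all hypothetical and chosen to match the retail example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Event:
    user_id: str
    action: str  # what happened, e.g. "viewed_product"


@dataclass
class Signal:
    purchase_probability: float  # AI output, e.g. 0.7


@dataclass
class DecisionRecord:
    event: Event
    signal: Signal
    action: str
    needs_human: bool
    reason: str


def decide(signal: Signal) -> str:
    # Decision: hypothetical rule that turns the prediction into an action.
    if 0.5 <= signal.purchase_probability < 0.9:
        return "offer_discount"
    return "no_discount"


def apply_boundary(action: str, discounts_given_today: int,
                   daily_limit: int = 100) -> Tuple[str, bool, str]:
    # Boundary: a policy constraint that can override the decision
    # and hand the case over to a human.
    if action == "offer_discount" and discounts_given_today >= daily_limit:
        return "hold_for_review", True, "daily discount limit reached"
    return action, False, "within policy"


def run_pipeline(event: Event, signal: Signal, discounts_given_today: int,
                 log: List[DecisionRecord]) -> DecisionRecord:
    action = decide(signal)
    action, needs_human, reason = apply_boundary(action, discounts_given_today)
    record = DecisionRecord(event, signal, action, needs_human, reason)
    log.append(record)  # Log: every decision is recorded with its reason
    return record
```

Calling `run_pipeline(Event("u1", "viewed_product"), Signal(0.7), 3, log)` yields an `offer_discount` record. The same Signal with the daily limit already reached is held for human review instead. In both cases the log shows what was decided and why.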

Absence of Decision Structure — Why the Same AI Produces Different Outcomes

AI can perform predictions and classifications.

However, what is actually required in real-world operations is deciding what to do based on those results.

- Under what conditions should it be executed?

- What should be prioritized?

- When should the process be stopped?

- When should it be handed over to a human?

If these decisions are not explicitly defined,

the output of AI remains at the level of a Signal,

and cannot be translated into real-world actions.
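One way to make these four questions explicit is to write them down as a small policy object. The sketch below is a minimal illustration. The field names, the thresholds, and the error counter are assumptions introduced here, not part of any particular system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionPolicy:
    execute_above: float         # execute automatically at or above this confidence
    handover_above: float        # at or above this (but below execute_above), ask a human
    max_consecutive_errors: int  # stop the process once errors exceed this count


def decide(policy: DecisionPolicy, confidence: float,
           consecutive_errors: int) -> str:
    # The order of the checks encodes the priority: stopping comes first,
    # then automatic execution, then handover to a human.
    if consecutive_errors > policy.max_consecutive_errors:
        return "stop"
    if confidence >= policy.execute_above:
        return "execute"
    if confidence >= policy.handover_above:
        return "handover_to_human"
    return "do_nothing"
```

With `DecisionPolicy(execute_above=0.9, handover_above=0.6, max_consecutive_errors=3)`, a confidence of 0.7 is handed to a human, while 0.95 is executed automatically unless more than three consecutive errors have occurred.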

More importantly,

these decisions differ across domains.

For example:

- In manufacturing: safety, downtime avoidance, and quality

- In finance: risk, regulation, and accountability

- In healthcare: urgency, safety, and ethics

- In retail: revenue, customer experience, and opportunity loss

These priorities shape how decisions are made.

Therefore,

even if the same Signal is produced,

the resulting Decision will differ.
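As a rough illustration of this point, the sketch below feeds one Signal into four domain policies. The thresholds and action names are hypothetical, picked only to reflect the priorities listed above.

```python
from typing import Dict, Tuple

# domain -> (threshold, action at or above threshold, action below threshold)
# All values are illustrative assumptions, not recommended settings.
POLICIES: Dict[str, Tuple[float, str, str]] = {
    "manufacturing": (0.5, "stop_line", "keep_running"),                # safety first
    "finance": (0.6, "escalate_to_compliance_review", "approve"),       # accountability
    "healthcare": (0.3, "alert_clinician", "routine_monitoring"),       # urgency
    "retail": (0.8, "pause_promotion", "continue_promotion"),           # opportunity loss
}


def decide(domain: str, anomaly_score: float) -> str:
    threshold, action_if_high, action_otherwise = POLICIES[domain]
    return action_if_high if anomaly_score >= threshold else action_otherwise


# The same Signal (an anomaly score of 0.7) evaluated in every domain.
decisions = {domain: decide(domain, 0.7) for domain in POLICIES}
```

Here the score of 0.7 stops the line in manufacturing, triggers a review in finance, and alerts a clinician in healthcare, yet lets the promotion continue in retail, because each domain sets its threshold according to what it prioritizes.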

In other words,

one reason AI systems fail in real-world deployment is that

decision priorities remain invisible: they are never explicitly designed or defined.

→ Related article:

Why the Same AI Produces Different Outcomes — The Invisible Design of “Decision Priorities”

Learn More

AI and Theories of Intelligence

- Should AI Aim for the “Ultimate Intelligence”? Intelligence Field and the Redesign of the Conditions for Social Existence

AI from a Mathematical Perspective

Reframing the Structure

- What Is a Decision System? — From AI Prediction to Decision Systems

- Structure Changes the World — Making Information Usable

- Structure Creates Culture — From Bauhaus to Design in the Age of AI

Decision-Making in a World Without Data

The Limits and Discontinuities of AI

- Smooth computation cannot represent decision-making — The problem of discontinuity in AI

- The numbers e and π — for smoothly computing the world

- AI becomes “intelligent” by not deciding — Gradients, probabilities, and deferral as a stance, and designing systems that do not rush decisions

- Probability is not for reducing uncertainty — The true role of probability and the concept of “designing to preserve ambiguity”

- Graph Neural Networks: A Technology Between Continuous Approximation and Semantic Discontinuity — Can AI Get Closer to Meaning?

Decision, Responsibility, and the Role of Humans

- Where do humans remain? — Rethinking Human-in-the-loop

- Why Human-in-the-loop fails in practice — Why “humans at the end” lose responsibility, and the shift toward Human-as-Author

- Why AI cannot bear responsibility — The inseparability of decision and responsibility, and the necessity of externalizing logic

- Whose values define the objective function? — Implicit decisions embedded in scores, and how to handle values humans did not explicitly define

Optimization, Evaluation, and Runaway Systems

- What happens when optimization runs out of control — When objective functions distort reality, and how to design around Goodhart’s Law

- Why Explainable AI is not truly explanatory — The difference between explanation and justification, and the division of roles between logic and accountability

- Exceptions are not failures — How to incorporate exception handling into system design, and why systems with more exceptions can be healthier

Common Sense, Trust, and Social Implementation

- Why AI cannot possess “common sense” — Common sense as a collection of discontinuities, and as ontology

- Designing staged trust scores as “contracts” — Treating trust not as a learned outcome, but as a revocable boundary

Reframing AI as an Industry

- AI Factory Model — AI is not software, but becomes a form of manufacturing

- AI Quality Engineering — Why quality engineering becomes critical again in the age of AI

Decision Trace Model

Architecture

Use Case